There is a widening gap between developers who get reliable output from Claude Code and developers who spend half their day undoing what the agent just built. The difference is not talent, experience, or some secret prompt-engineering trick. It is a question of methodology. The developers shipping production software with AI agents have converged on a pattern, whether they call it that or not: define what you want before the agent starts writing code.

This article gives that pattern a name. Spec-first development is a methodology for AI-assisted software engineering. Not a vague "best practice." A structured, repeatable lifecycle with defined phases, clear checkpoints, and concrete artifacts at every step. If you have been searching for a way to make Claude Code output predictable enough to bet your release schedule on, this is the framework.

The Vibe Coding Ceiling

"Vibe coding" entered the vocabulary in early 2025. The pitch: describe what you want in natural language, let the AI write it, iterate until it looks right. For prototypes, weekend projects, and one-off scripts, vibe coding works. You get something functional fast, and if it breaks later, the stakes are low.

Production software operates under different constraints. The code must integrate with an existing codebase, satisfy specific requirements, and survive contact with other people who will maintain it. When vibe coding meets these constraints, the failure modes are predictable.

The first failure is drift. You describe a feature loosely, the agent implements its interpretation, you adjust, the agent reimplements its adjusted interpretation. Three iterations later, you have working code that satisfies none of your original requirements because each iteration shifted the target. You are converging on what the agent thinks you want, not on what you actually need.

The second failure is invisible decisions. Every gap in your description is a decision the agent makes silently. Database schema, error handling strategy, API shape, validation rules, library choices. You discover these decisions during code review, or worse, in production. The agent did not make bad decisions. It made uninstructed decisions, and you had no mechanism to catch them before they were baked into the implementation.

The third failure is review paralysis. A 600-line diff where the agent chose the architecture, the data model, the error codes, and the edge case handling is not reviewable in the traditional sense. You are not reviewing code against a spec. You are reverse-engineering the spec from the code, then deciding whether you agree with it. This takes longer than writing the spec would have.

Vibe coding hits a ceiling because it conflates two distinct activities: deciding what to build and building it. Spec-first development separates them.

Spec-First as a Methodology

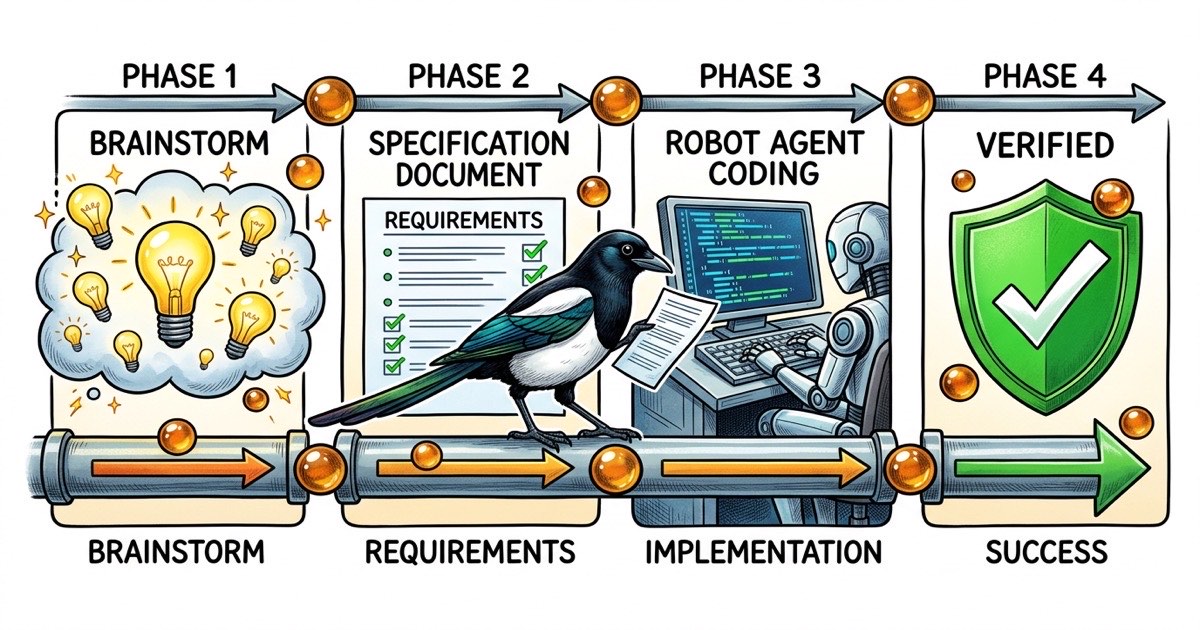

Spec-first development is a four-phase lifecycle. Each phase produces a concrete artifact. Each transition has a clear gate condition. The methodology works with any AI coding agent, but the examples in this article use Claude Code because that is where the community is iterating fastest.

Phase 1: Brainstorm

You and the agent (or just you) explore the problem space. What are the constraints? What approaches exist? What are the tradeoffs? This is conversational. You are not committing to anything. You are mapping the territory.

The gate condition: you have a preferred approach and can articulate why you chose it over the alternatives.

Brainstorming with Claude Code is valuable because the agent has broad knowledge of patterns and libraries. The mistake is jumping from brainstorm directly to code. The brainstorm surfaces options. It does not choose among them. You do.

Phase 2: Spec

You write down the decision. This is the contract the agent will implement against. A spec is not a user story, not a Jira ticket, not a paragraph of prose. It is a structured document with:

- Problem statement: what is broken or missing, in concrete terms

- Proposed approach: the chosen solution from the brainstorm phase

- Files affected: which files the agent should touch (and implicitly, which it should not)

- Acceptance criteria: testable conditions that define "done"

- Out of scope: what the agent should explicitly avoid
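Because every spec has the same five sections, it can live as a template rather than free-form prose. The following is a minimal Python sketch, not a prescribed format: the `Spec` class and its `to_markdown` method are hypothetical names, and Markdown output is an assumption chosen because it is easy to commit to the repository and point the agent at.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Spec:
    """Hypothetical container for the five sections of a spec."""

    problem: str
    approach: str
    files_affected: List[str]
    acceptance_criteria: List[str]
    out_of_scope: List[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the spec as Markdown that can be committed and handed to the agent."""

        def bullets(items: List[str]) -> str:
            # An empty section is rendered explicitly so its absence is visible in review.
            return "\n".join(f"- {item}" for item in items) or "- (none)"

        # Number the criteria so the plan and the verification record can cite them.
        criteria = "\n".join(
            f"{i}. {text}" for i, text in enumerate(self.acceptance_criteria, start=1)
        )
        return (
            f"## Problem statement\n{self.problem}\n\n"
            f"## Proposed approach\n{self.approach}\n\n"
            f"## Files affected\n{bullets(self.files_affected)}\n\n"
            f"## Acceptance criteria\n{criteria}\n\n"
            f"## Out of scope\n{bullets(self.out_of_scope)}\n"
        )


# Illustrative usage; the feature and file paths are made up.
spec = Spec(
    problem="Users are logged out on every page refresh because no session is issued.",
    approach="Issue an opaque session token on successful login.",
    files_affected=["src/auth/login.py", "tests/test_login.py"],
    acceptance_criteria=[
        "Submitting valid credentials returns a 200 with a session token",
        "Submitting invalid credentials returns a 401 with no token",
    ],
    out_of_scope=["Refactoring the user model", "SSO support"],
)
print(spec.to_markdown())
```

Numbering the criteria gives later artifacts a stable way to refer to each one.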

The acceptance criteria are the most important element. Each one must be a concrete action with an observable outcome. "Authentication should work" is not a criterion. "Submitting valid credentials returns a 200 with a session token; submitting invalid credentials returns a 401 with no token" is.
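A criterion written this way translates almost line for line into automated checks. A minimal sketch, assuming a hypothetical in-process `login()` handler that returns a status code and an optional token; against a real service these checks would be HTTP requests, but the shape of one test per criterion stays the same.

```python
import secrets
from typing import Optional, Tuple

# Hypothetical user store and handler standing in for the real authentication service.
USERS = {"ada": "correct horse battery staple"}


def login(username: str, password: str) -> Tuple[int, Optional[str]]:
    """Return (HTTP status code, session token or None)."""
    # A real service would compare password hashes, never plaintext.
    if USERS.get(username) == password:
        return 200, secrets.token_urlsafe(32)
    return 401, None


def test_valid_credentials_return_200_with_session_token() -> None:
    # Criterion: valid credentials produce a 200 and a session token.
    status, token = login("ada", "correct horse battery staple")
    assert status == 200
    assert token  # a non-empty token was issued


def test_invalid_credentials_return_401_with_no_token() -> None:
    # Criterion: invalid credentials produce a 401 and no token.
    status, token = login("ada", "wrong password")
    assert status == 401
    assert token is None
```

Run under pytest, a failing test names the exact criterion it violates, which is what makes the later verification step mechanical.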

The out-of-scope section prevents gold-plating. Without it, agents will "improve" adjacent code, refactor files they noticed were messy, or add features that seem related. Every minute the agent spends on unrequested work is a minute you spend reviewing unrequested work.
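The "Files affected" list also gives you a cheap mechanical guard against gold-plating: compare the files the agent actually changed with the files the spec allows. A sketch, assuming the changed paths come from something like `git diff --name-only` and that the spec's file list may contain shell-style wildcards; `out_of_scope_changes` is a hypothetical helper, not part of any tool.

```python
from fnmatch import fnmatchcase
from typing import List


def out_of_scope_changes(changed_files: List[str], files_affected: List[str]) -> List[str]:
    """Return changed paths that match no entry in the spec's "Files affected" list.

    Entries may be exact paths or shell-style patterns such as "src/auth/*.py".
    fnmatchcase is case-sensitive on every platform; note that its "*" also
    matches "/", so "src/auth/*" covers nested directories too.
    """
    return [
        path
        for path in changed_files
        if not any(fnmatchcase(path, pattern) for pattern in files_affected)
    ]


# Illustrative usage with made-up paths.
allowed = ["src/auth/*.py", "tests/test_login.py"]
changed = ["src/auth/login.py", "tests/test_login.py", "src/billing/invoice.py"]
print(out_of_scope_changes(changed, allowed))  # ['src/billing/invoice.py']
```

Any path this returns is unrequested work: either the spec was incomplete or the agent wandered, and both are worth knowing before review starts.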

The gate condition: someone who was not in the brainstorm could read this spec and build the right thing.
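The gate itself is a human judgment, but part of it can be checked before anyone reads the spec: are all five sections present, and does each acceptance criterion avoid obviously untestable wording? A rough sketch, assuming the spec is Markdown with one heading per section; `lint_spec` and the `VAGUE_PHRASES` list are illustrative assumptions, and passing this check is necessary but nowhere near sufficient.

```python
import re
from typing import List

REQUIRED_SECTIONS = [
    "Problem statement",
    "Proposed approach",
    "Files affected",
    "Acceptance criteria",
    "Out of scope",
]

# Illustrative heuristic: phrases that usually signal a criterion with no observable outcome.
VAGUE_PHRASES = ("should work", "works correctly", "properly", "as expected")


def lint_spec(markdown: str) -> List[str]:
    """Return a list of problems; an empty list means only that these mechanical checks passed."""
    problems = []

    # Collect Markdown heading text ("## Files affected" becomes "files affected").
    headings = {
        h.strip().lower()
        for h in re.findall(r"^#+[ \t]*(.+)$", markdown, flags=re.MULTILINE)
    }
    for section in REQUIRED_SECTIONS:
        if section.lower() not in headings:
            problems.append(f"missing section: {section}")

    # Grab the text between the "Acceptance criteria" heading and the next heading.
    match = re.search(
        r"^#+[ \t]*Acceptance criteria[ \t]*$(.*?)(?=^#|\Z)",
        markdown,
        flags=re.MULTILINE | re.DOTALL | re.IGNORECASE,
    )
    if match:
        # Numbered ("1. ...") or bulleted ("- ...") lines count as criteria.
        criteria = re.findall(
            r"^[ \t]*(?:\d+\.|-)[ \t]+(.+)$", match.group(1), flags=re.MULTILINE
        )
        if not criteria:
            problems.append("acceptance criteria section is empty")
        for criterion in criteria:
            if any(phrase in criterion.lower() for phrase in VAGUE_PHRASES):
                problems.append(f"vague criterion: {criterion}")
    return problems
```

A spec that passes still needs the real test: someone outside the brainstorm reading it and knowing what to build.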

Phase 3: Implement

The agent executes against the spec. Not against a conversation. Not against a memory of what you discussed. Against a concrete document with testable criteria.

Before writing any code, the agent produces a plan: a numbered list of changes it intends to make, which files it will modify, and how it will verify the result. This plan is a two-minute checkpoint. You read it, confirm it matches your intent, and green-light the implementation. Or you catch a misunderstanding and correct it. Either way, you have spent two minutes instead of twenty.
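Part of that two-minute read can be made systematic: every step should stay inside the spec's file list, and every acceptance criterion should be covered by at least one verification step. A minimal sketch, assuming the plan has been captured as structured data; `PlanStep` and `review_plan` are hypothetical names, and an agent's plan typically arrives as prose that you read rather than parse.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class PlanStep:
    """One numbered step of the agent's plan (hypothetical structure)."""

    description: str
    files: List[str]
    verifies: List[int] = field(default_factory=list)  # 1-based acceptance-criterion numbers


def review_plan(steps: List[PlanStep], files_affected: Set[str], num_criteria: int) -> List[str]:
    """Flag plan steps outside the spec's file list and criteria no step verifies."""
    issues = []
    for n, step in enumerate(steps, start=1):
        for path in step.files:
            if path not in files_affected:
                issues.append(f"step {n} touches {path}, which the spec does not list")
    covered = {c for step in steps for c in step.verifies}
    for criterion in range(1, num_criteria + 1):
        if criterion not in covered:
            issues.append(f"acceptance criterion {criterion} has no verification step")
    return issues


# Illustrative usage with made-up steps.
plan = [
    PlanStep("Issue a session token on successful login", ["src/auth/login.py"]),
    PlanStep("Add one test per criterion", ["tests/test_login.py"], verifies=[1, 2]),
]
print(review_plan(plan, {"src/auth/login.py", "tests/test_login.py"}, num_criteria=2))  # []
```

An empty list does not mean the plan is right, only that it has no structural gaps; the comprehension check is still yours.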

The plan-before-code pattern is not bureaucracy. It is the single highest-leverage intervention in the entire workflow. Most implementation mistakes are not coding errors. They are comprehension errors: the agent misunderstood the spec. Catching a comprehension error in a plan costs two minutes. Catching it in a 400-line diff costs twenty. Catching it in production costs a day.

The gate condition: the agent has posted a completion report with specific claims about what was built and how it was verified.

Phase 4: Verify

You or a QA process confirm the implementation against the spec. Not "does it look right?" but "does it satisfy each acceptance criterion?"

Verification is mechanical. You take each criterion from the spec, execute the test (run a command, open a browser, trigger an event), and record the result: pass or fail. Criteria that fail go back to Phase 3. The verification is documented alongside the implementation so that anyone who reads the task six months from now can see exactly what was tested.
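When a criterion can be reduced to a command, the mechanical part of verification can be scripted. A sketch, assuming each criterion is paired with a command whose exit status means pass (zero) or fail (nonzero); `CriterionCheck` and `verify` are hypothetical, and criteria that need a browser or a manual trigger would still be recorded by hand.

```python
import json
import subprocess
import sys
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List


@dataclass
class CriterionCheck:
    criterion: str      # copied verbatim from the spec's acceptance criteria
    command: List[str]  # exit status 0 means pass, anything else means fail


def verify(checks: List[CriterionCheck]) -> List[Dict[str, str]]:
    """Run every check once and record a pass/fail result for each criterion."""
    record = []
    for check in checks:
        try:
            completed = subprocess.run(check.command, capture_output=True, text=True)
            result = "pass" if completed.returncode == 0 else "fail"
        except OSError:
            # A command that cannot even start counts as a failed check, not a skipped one.
            result = "fail"
        record.append({
            "criterion": check.criterion,
            "command": " ".join(check.command),
            "result": result,
            "checked_at": datetime.now(timezone.utc).isoformat(),
        })
    return record


if __name__ == "__main__":
    # Hypothetical check: assumes pytest is installed and a test with this node ID exists.
    checks = [
        CriterionCheck(
            "Submitting valid credentials returns a 200 with a session token",
            [sys.executable, "-m", "pytest", "-q",
             "tests/test_login.py::test_valid_credentials_return_200_with_session_token"],
        ),
    ]
    print(json.dumps(verify(checks), indent=2))
```

Storing the JSON output with the task (for example, in the ticket or pull request) preserves exactly which command established each result.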

The gate condition: every acceptance criterion has a recorded pass/fail result.
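The gate condition and the loop back to Phase 3 are separate checks, and they are easy to blur: a recorded failure satisfies the gate but still sends that criterion back to implementation. A minimal sketch, assuming the record maps each criterion to "pass" or "fail"; the function name and return shape are illustrative.

```python
from typing import Dict, List


def check_verification(criteria: List[str], record: Dict[str, str]) -> Dict[str, object]:
    """Evaluate the Phase 4 gate and route failures back to Phase 3.

    The gate is met when every criterion has a recorded result ("pass" or "fail").
    A failed criterion does not block the gate; it goes back to implementation.
    """
    unrecorded = [c for c in criteria if record.get(c) not in ("pass", "fail")]
    failed = [c for c in criteria if record.get(c) == "fail"]
    gate_met = not unrecorded
    return {
        "gate_met": gate_met,
        "unrecorded": unrecorded,
        "back_to_phase_3": failed,
        "done": gate_met and not failed,
    }


print(check_verification(["AC1", "AC2"], {"AC1": "pass", "AC2": "fail"}))
# {'gate_met': True, 'unrecorded': [], 'back_to_phase_3': ['AC2'], 'done': False}
```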

That is the complete lifecycle. Four phases, four artifacts (approach rationale, spec, implementation plan, verification record), four gate conditions. The phases are sequential but lightweight. For a medium-sized feature, phases 1 and 2 take 15-20 minutes. Phase 3 takes whatever the implementation takes. Phase 4 takes 5-10 minutes.
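The whole lifecycle fits in a small state machine. This sketch is an illustrative model of the four phases, their artifacts, and their gates, not a tool the methodology requires; the `Phase` enum and `next_phase` function are hypothetical names.

```python
from enum import Enum


class Phase(Enum):
    BRAINSTORM = "brainstorm"
    SPEC = "spec"
    IMPLEMENT = "implement"
    VERIFY = "verify"
    DONE = "done"


# The artifact each phase produces, following the summary above.
ARTIFACTS = {
    Phase.BRAINSTORM: "approach rationale",
    Phase.SPEC: "spec",
    Phase.IMPLEMENT: "implementation plan",
    Phase.VERIFY: "verification record",
}

ORDER = [Phase.BRAINSTORM, Phase.SPEC, Phase.IMPLEMENT, Phase.VERIFY]


def next_phase(current: Phase, gate_met: bool, has_failures: bool = False) -> Phase:
    """Advance only when the current phase's gate condition holds."""
    if current is Phase.DONE:
        return Phase.DONE
    if not gate_met:
        return current  # stay put until the gate condition is satisfied
    if current is Phase.VERIFY:
        # Failed criteria send the work back to implementation, not back to the spec.
        return Phase.IMPLEMENT if has_failures else Phase.DONE
    return ORDER[ORDER.index(current) + 1]
```

The only backward edge runs from Verify to Implement, which reflects the point of the methodology: once the spec passes its gate, failures are implementation problems, not reasons to renegotiate the target.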

Why This Matters More with Agents Than Humans

Every argument for writing specs predates AI. "Write requirements before code" has been advice since before most of us were born. So why frame this as something specific to AI-assisted development?

Because agents change the cost function.

A human developer who receives a vague requirement will stop and ask questions. "Did you mean password auth or SSO?" "Should this work on mobile?" "What happens when the token expires?" Every question is a mini-checkpoint that nudges the implementation toward the correct target. The cost of a vague spec with a human developer is a few Slack threads and maybe an afternoon of rework.

An agent that receives a vague requirement will not stop. It will make every ambiguous decision silently, commit to an approach, and present you with a finished implementation. The cost of a vague spec with an agent is a finished implementation that may be entirely wrong, plus the time you spend discovering it is wrong, plus the time you spend redoing it.

The asymmetry is stark. Agents are faster at execution and worse at judgment than human developers. Every ambiguity in the spec is a judgment call, and every judgment call the agent makes without guidance is a coin flip on whether the result matches your intent. A spec eliminates coin flips.
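Taking the coin-flip framing literally shows how quickly unguided decisions compound. Assume, purely for illustration, that each of $n$ unspecified decisions independently matches your intent with probability $p$:

```latex
P(\text{all } n \text{ decisions match}) = p^{n},
\qquad p = \tfrac{1}{2},\; n = 5 \;\Rightarrow\; \left(\tfrac{1}{2}\right)^{5} = \tfrac{1}{32} \approx 3.1\%.
```

Real decisions are neither independent nor 50/50, so the numbers are illustrative only. The point is structural: under this model, the chance of a fully correct result shrinks multiplicatively with every decision the spec leaves open.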

There is a second, subtler reason. Agents do not push back. A senior engineer who receives a bad spec will say "this doesn't make sense because X." An agent will implement a bad spec faithfully and produce faithfully wrong output. Spec-first development forces you to pressure-test your own thinking before handing it to an entity that will execute it without question. The spec is not just for the agent. It is for you.