You have a feature that needs three things done at once. You could spawn subagents inside a single Claude Code session and let them work in parallel. Or you could split the work into three independent tasks, hand each to a separate agent in its own tmux pane, and let them run without knowing about each other.

Both approaches parallelize work. They solve different problems. Pick the wrong one and you'll either burn context window on coordination overhead or create merge conflicts that take longer to fix than the original task.

This is the decision framework I use every day while running 13 Claude Code agents on the same codebase. It's not theoretical. It's the result of getting it wrong enough times to know where the boundary sits.

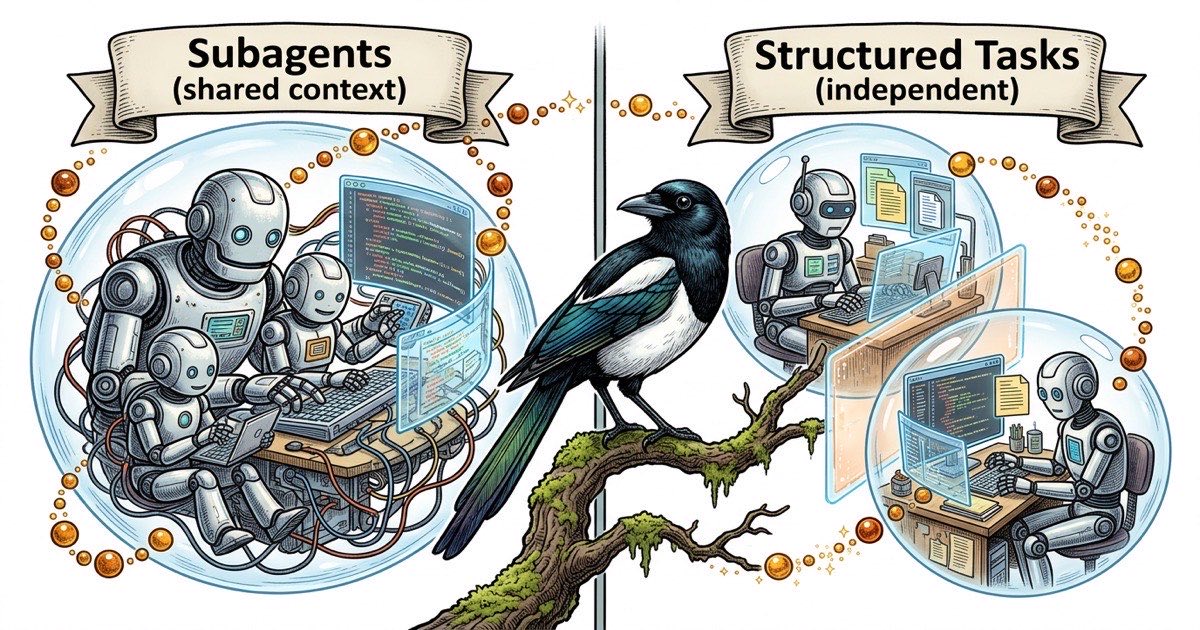

Two Kinds of Parallelism

Claude Code supports two distinct ways to run work concurrently.

Subagents are child processes spawned within a single Claude Code session. The parent agent kicks off multiple subagents, each tackling a piece of the problem, then collects their results. They share the same working directory and the parent's context. Think of them as threads in a single process.

Parent agent

├── Subagent A: "Search lib/ for all usages of parseConfig"

├── Subagent B: "Search server/ for all usages of parseConfig"

└── Subagent C: "Search components/ for all usages of parseConfig"

Separate agents run in independent Claude Code sessions, typically in separate tmux panes. Each has its own context window, its own CLAUDE.md identity file, and its own view of the codebase. They don't share memory. They communicate through artifacts: task comments, status updates, committed code. Think of them as separate processes with no shared state.

tmux pane 1: eng1 agent → "Build the REST endpoint"

tmux pane 2: eng2 agent → "Build the React component"

tmux pane 3: qa1 agent → "Write integration tests for both"

The mental model matters because it determines how work flows between units. Subagents can pass data back to the parent cheaply. Separate agents pass data through the filesystem, git, or an external task tracker.

When Subagents Win

Use subagents when the work shares state and the results need to converge.

Parallel research. You need to search five directories for a pattern, read three documentation files, and synthesize the findings into one recommendation. Subagents can each take a search path, return results, and the parent can combine them without any serialization overhead.

Independent transformations on the same data. You're refactoring a module and need to update the type definitions, the tests, and the documentation in one coherent change. Each subagent handles one file, but the parent ensures the changes are consistent because it sees all three results before committing.

Fast exploration. You're debugging and need to check the git log, the test output, and the runtime config simultaneously. Subagents can gather all three in parallel and the parent synthesizes a diagnosis.

The pattern: fan out, gather back, act on the combined result. If your parallelism ends with the parent needing to reason about all the outputs together, subagents are the right tool.

What subagents are bad at: anything that takes more than a few minutes per branch, anything that modifies files in overlapping paths, or anything that needs independent verification. Subagents share a working directory, so two subagents writing to the same file will corrupt each other's work. And because they share context, a long-running subagent eats into the parent's available window.

When Separate Agents Win

Use separate agents when the work can be verified independently and doesn't need a shared context to make sense.

Different components of the same feature. "Build the API endpoint" and "Build the frontend that calls it" are independent until integration. The API engineer doesn't need the React component in context. The frontend engineer doesn't need the database schema. Giving each its own agent with a scoped CLAUDE.md keeps context clean and prevents one agent's complexity from bleeding into the other's work.

Different acceptance criteria. If task A is done when the endpoint returns 200 with the correct JSON shape, and task B is done when the component renders the data with proper error states, those are separate verification targets. A QA agent can validate each independently. Subagents can't be independently QA'd because they produce one combined output.

Work that touches different parts of the codebase. File ownership is the simplest way to prevent merge conflicts. Agent A owns server/, Agent B owns components/. Neither reaches into the other's territory. If you tried this with subagents, you'd need the parent to manage file locking, which defeats the purpose of parallelism.

Tasks with different time horizons. One task takes 10 minutes, the other takes 2 hours. With subagents, the parent waits for the slowest child. With separate agents, the short task completes, gets verified, and ships while the long task is still running.

The pattern: fire, forget, verify separately. If each piece of work stands alone and can be checked alone, separate agents with structured tasks are cleaner.

The Handoff Problem

The real decision point comes down to handoffs.

Subagent handoffs are cheap. The child returns data to the parent in the same context. No serialization, no file writes, no waiting for a status update. The parent spawns three subagents, they return three results, the parent has everything it needs.

Separate agent handoffs are expensive but durable. Agent A completes work, commits code, updates a task status, and comments what it did. Agent B picks up that signal (either through a coordinator or by polling the task tracker) and starts its dependent work. The overhead is real: you need a task system, a status protocol, and some way for agents to discover what other agents have done.

Here's the rule of thumb: if the work requires more than one handoff between the parallel units, use subagents. If it's a single fan-out-and-gather, subagents are simpler. If Agent A's output is Agent B's input, which becomes Agent C's input, the coordination cost of separate agents is justified because each handoff produces a verified, committed artifact that won't be lost if an agent crashes or hits a context limit.

A concrete example. You need to:

- Find all API endpoints that return user data

- Add rate limiting to each one

- Write tests for the new rate limits

- Update the API documentation

Steps 1 and 2 are tightly coupled. The search results (step 1) feed directly into the modification (step 2). A subagent handles the search; the parent applies the changes. That's a subagent pattern.

Steps 3 and 4 are independent of each other but depend on step 2. The tests need the actual endpoint code. The docs need the final API shape. These are separate tasks for separate agents, each with its own acceptance criteria, each verifiable on its own.