# Beadbox

> Beadbox is a native desktop GUI for the beads issue tracker. It gives developers a visual view of the work their AI coding agents are doing: dependency graphs, epic progress trees, and real-time updates across an agent fleet. Built on Tauri (not Electron), it runs on macOS, Linux, and Windows.

Beadbox is the GUI. The underlying issue tracker is **beads** (the `bd` CLI), created by Steve Yegge: https://github.com/steveyegge/beads. Beads stores every issue ("bead") in a local Dolt database — a SQL database with Git-like version control — so issue data is local-first and never leaves the machine unless the user pushes to a Dolt remote.

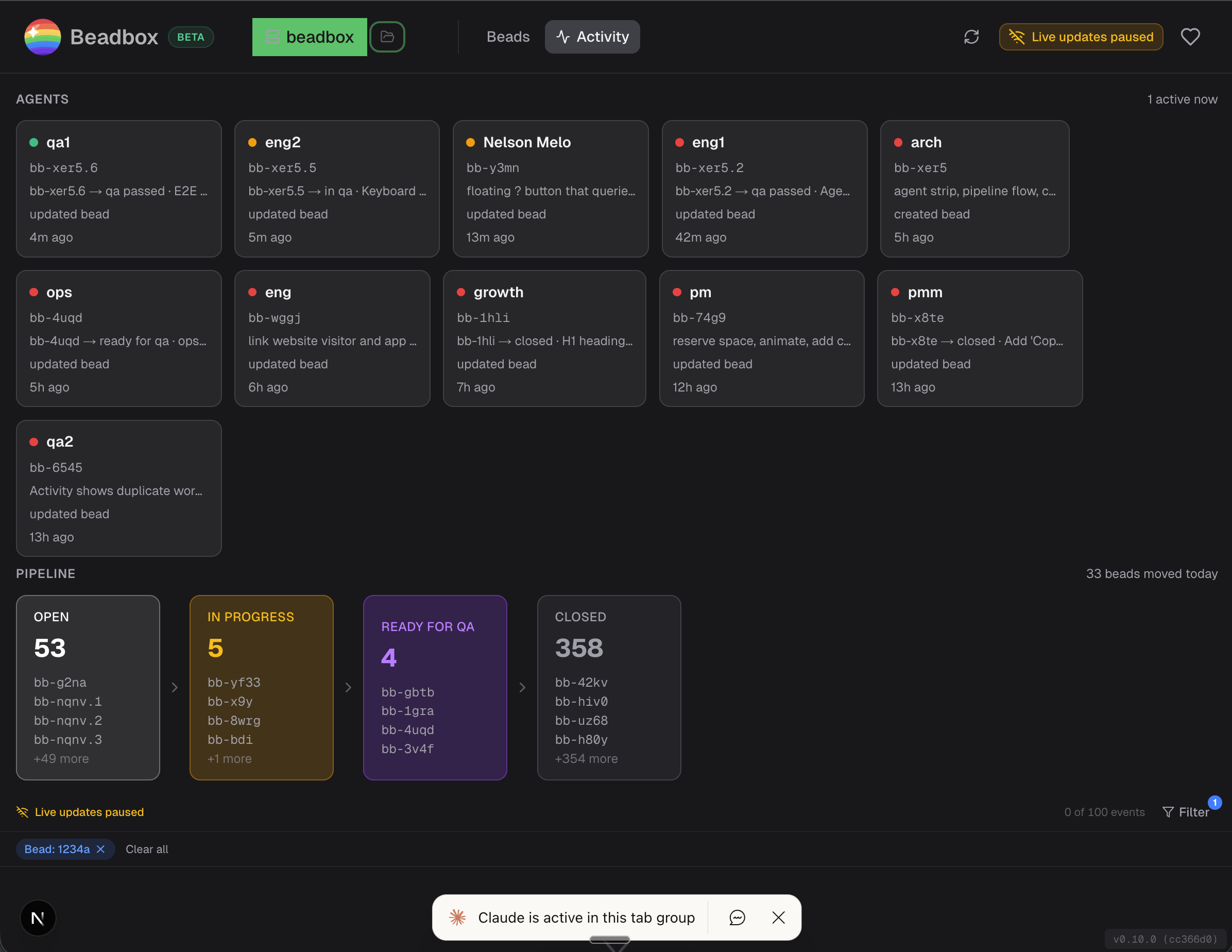

Typical user: a developer running one or more AI coding agents (Claude Code, Cursor, etc.) who needs a real-time view of what the agent fleet is doing, where work is blocked, and how epics are progressing. The CLI (`bd`) is faster for creating and closing single issues; Beadbox is for seeing the whole picture.

## Install

- Homebrew (macOS): `brew install --cask beadbox/beadbox/beadbox`

- Requires the beads CLI first: `brew install beads`

- All downloads: https://beadbox.app/en/#download

## Product pages

- [Homepage](https://beadbox.app/en): Product overview, hero, features.

- [Beads GUI](https://beadbox.app/en/beads-gui): What Beadbox shows that the CLI can't. Dependency graphs, epic progress, live updates.

- [Beads Dashboard](https://beadbox.app/en/beads-dashboard): Real-time fleet view for teams running multiple AI coding agents.

- [Local-First Issue Tracker](https://beadbox.app/en/local-first-issue-tracker): Privacy and Dolt-backed positioning. No cloud, no accounts, no telemetry on issue data.

## Documentation

- [Getting Started](https://beadbox.app/en/docs/getting-started): Install the bd CLI and Beadbox, open your first workspace. Full quickstart.

- [Core Concepts](https://beadbox.app/en/docs/concepts): The beads data model — workspaces, beads, epics, dependencies, comments.

- [Agent Setup](https://beadbox.app/en/docs/agent-setup): Wiring AI coding agents (Claude Code, etc.) to bd so they create and update issues.

- [Custom Statuses](https://beadbox.app/en/docs/custom-statuses): Define workflow states beyond the five built-in statuses (open, in_progress, blocked, deferred, closed).

- [Keyboard Shortcuts](https://beadbox.app/en/docs/keyboard-shortcuts): Full keyboard-driven navigation reference.

- [Connecting to a Dolt Server](https://beadbox.app/en/docs/connecting-dolt-server): Multi-writer setup against a shared Dolt SQL server.

## Core blog posts

- [Why We Built Beadbox](https://beadbox.app/en/blog/why-we-built-beadbox): Motivation and origin story.

- [I Ship Software with 13 AI Agents](https://beadbox.app/en/blog/coding-with-13-agents): Concrete description of a multi-agent development workflow that depends on beads for coordination.

- [Why Project Management Tools Don't Work for AI Agents](https://beadbox.app/en/blog/why-project-management-tools-dont-work-for-ai-agents): Argument for why Jira/Linear/GitHub Issues fall short when agents are the primary writers.

- [Visualizing Dependencies Between AI Agents in Real Time](https://beadbox.app/en/blog/visualizing-dependencies-between-ai-agents-real-time): How Beadbox's dependency graph surfaces blockers across a fleet.

## Policies

- [Privacy Policy](https://beadbox.app/en/privacy): What data Beadbox and beadbox.app collect (app-usage analytics only; never issue content).

- [Terms](https://beadbox.app/en/terms): Terms of use.

## Optional

- [Local-First Issue Tracking with Dolt](https://beadbox.app/en/blog/local-first-issue-tracking-dolt-cli-integration): Deep dive on why Dolt is the right backing store for a local-first issue tracker.

- [Spec-Driven Development with Claude Code](https://beadbox.app/en/blog/spec-driven-development-claude-code): Workflow for writing specs before code when driving agents through beads.

- [How to Manage Tasks for Claude Code Agents](https://beadbox.app/en/blog/manage-tasks-claude-code-agents): Practical guide to using beads as the task queue for Claude Code.

- [Keyboard-Driven Triage & Visual Issue Tracking](https://beadbox.app/en/blog/keyboard-driven-triage-visual-issue-tracking): Power-user triage patterns in the Beadbox UI.

- [Linear Alternatives: Local-First Issue Tracking](https://beadbox.app/en/blog/linear-alternatives-local-first-issue-tracking): Comparison with cloud issue trackers.

- [beads v1: The Hard Way](https://beadbox.app/en/blog/beads-v1-the-hard-way): Engineering notes from the v1 release of the bd CLI.

- [How to Monitor Multiple Claude Code Agents](https://beadbox.app/en/blog/how-to-monitor-multiple-claude-code-agents): Operational guide for running an agent fleet.

- [Triage Blocked Tasks in Parallel Development](https://beadbox.app/en/blog/triage-blocked-tasks-parallel-development): Using the blocked-bead view to unblock agents quickly.

---

# Full blog content

The sections below contain the complete markdown body of every post on the Beadbox blog, ordered newest first. Each section starts with `## {title}`, followed by publication metadata and the post body.

## v0.24.0: Goodbye Standalone Dolt

Published: 2026-04-16 · URL: https://beadbox.app/en/blog/v0-24-0-simplified-install

For a while now, getting Beadbox running meant installing Dolt separately, then the beads CLI, then Beadbox itself, then hoping the versions lined up. It worked, but it was a lot to ask for something that's supposed to just show you your issues. As of v0.24.0, Dolt is bundled into the beads CLI directly. If you have `beads` installed, you have everything you need.

The new flow is three commands: `brew install beads`, then `bd init` in your project, then `brew install --cask beadbox`. That's it. No Homebrew tap for Dolt, no matching versions, no standalone server process to babysit. Under the hood, beads v1.0.1 ships with an embedded Dolt engine that Beadbox connects to through the same CLI you were already using. The bump to `BD_MIN_VERSION` 1.0.1 is what unlocks this, so older beads installs will see a friendly prompt to upgrade.
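A minimum-version gate like the one described above can be sketched in a few lines of shell. This is a hypothetical illustration, not Beadbox's code: `bd_version_ok` and `bd_version_message` are invented names, the installed version is passed in as an argument (reading it from `bd` is left out), and the sketch assumes GNU `sort -V` for version ordering.

```bash
#!/usr/bin/env bash
# Hypothetical minimum-version gate (not Beadbox's implementation).
# Assumes GNU coreutils `sort -V` for natural version ordering.
BD_MIN_VERSION="1.0.1"

# bd_version_ok VERSION: succeeds if VERSION >= BD_MIN_VERSION.
bd_version_ok() {
  local installed="$1"
  # `sort -V -C` succeeds only if its input is already in version order,
  # i.e. the minimum sorts at or before the installed version.
  printf '%s\n%s\n' "$BD_MIN_VERSION" "$installed" | sort -V -C
}

# bd_version_message VERSION: prints what a caller might show the user.
bd_version_message() {
  if bd_version_ok "$1"; then
    echo "ok"
  else
    echo "beads $1 is older than $BD_MIN_VERSION; please upgrade"
  fi
}
```

Version-aware sorting matters here: a plain string comparison would rank `1.0.10` below `1.0.2`.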

If you're on a team that was running a shared Dolt server for sync, nothing changes. Server mode is still there and still works the same way. We just stopped asking solo users to set one up locally when the embedded engine covers what they need. For most people, this is one fewer thing to install, one fewer process in Activity Monitor, and one fewer thing that can drift out of sync.

Upgrade with `brew upgrade beads-ui` or grab a fresh install from [beadbox.app](https://beadbox.app). Release notes live in [the announcement discussion](https://github.com/beadbox/beadbox/discussions/19).

## v0.23.1: Flock Errors, Sidecar Crashes, and a Windows Path Bug

Published: 2026-04-15 · URL: https://beadbox.app/en/blog/v0-23-1-patch-embedded-reliability

If you've been running Beadbox alongside the bd CLI in embedded mode, you've probably seen flock errors. The embedded Dolt backend uses exclusive file locks, and when both Beadbox's polling and your terminal commands compete for the same lock, one of them loses. In v0.23.1, we added a 2-second debounce to Beadbox's change detection and expanded the retry window. The lock contention that caused those errors should be gone.
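To see why a debounce reduces lock contention, here is a small simulation of a trailing debounce, one common variant: a burst of change events collapses into a single refresh that fires only after the burst has been quiet for the whole window. It is a hypothetical model, not Beadbox's change-detection code; `debounce_fire_times` is an invented name and timestamps are plain seconds.

```bash
#!/usr/bin/env bash
# Hypothetical trailing-debounce simulation (not Beadbox's implementation).

# debounce_fire_times WINDOW T1 T2 ...: given sorted event timestamps in
# seconds, print the times at which a trailing debounce of WINDOW would fire.
debounce_fire_times() {
  local window="$1"; shift
  [ "$#" -eq 0 ] && return 0            # no events, nothing fires
  printf '%s\n' "$@" | awk -v w="$window" '
    NR > 1 && $1 - prev >= w { print prev + w }  # quiet gap: previous burst settled
    { prev = $1 }
    END { print prev + w }                       # the last burst always settles
  '
}
```

With a 2-second window, events at 0, 0.5, 1, and 5 seconds produce two refreshes, at 3 and 7 seconds, instead of four separate lock acquisitions.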

There was also a failure mode that Windows users noticed first, though it wasn't platform-specific: when the Node.js backend process died mid-session, Beadbox just kept rendering the last known state. No error message, no indication anything was wrong. You'd click around, eventually hit "Failed to fetch," and have no option except restarting the whole app. We added a sidecar health monitor that catches backend crashes and shows a recovery screen with a one-click restart.

[@red-dot-camel](https://github.com/red-dot-camel) filed [#17](https://github.com/beadbox/beadbox/issues/17) with a clear reproduction path for a Windows-specific issue: workspaces living on non-home drives would lose their connection on every tab switch. Detailed enough that we reproduced and patched it the same day. Bug reports like this one save us weeks of guessing.

Upgrade with `brew upgrade beads-ui` or download from [beadbox.app](https://beadbox.app). Full release notes in [the announcement discussion](https://github.com/beadbox/beadbox/discussions/18).

## We Shipped beads v1.0.0 Support. It Took a Rollback, a Flock Bug, and 6 Hotfixes.

Published: 2026-04-03 · URL: https://beadbox.app/en/blog/beads-v1-the-hard-way

On April 2, beads shipped v1.0.0. The headline feature was embedded Dolt: a zero-config backend that runs the database in-process, with no separate server to manage. For solo developers, that meant `bd init` and you're done. No ports, no daemons, no configuration.

We started adding support in Beadbox immediately. Six hotfix releases, a public rollback, and a deep dive into bd's source code later, we came out the other side with a resilience layer we probably should have built months ago.

## The morning before everything broke

The day started clean. We'd been running a dead code hunt across the codebase and shipped v0.20.0 with 5,350 lines removed and a 2-second improvement on cold launch. Forty-two beads closed. A good morning.

Then we upgraded bd to 0.63.3, the first release built on beads v1.0.0's embedded Dolt backend.

Beadbox couldn't find the database. Embedded mode stores data in `.beads/embeddeddolt/` instead of `.beads/dolt/`. The database name changed too, from hardcoded `beads` to a project prefix read from `metadata.json`. And `bd sql`, which our WebSocket server used for O(1) change detection via `DOLT_HASHOF_TABLE`, isn't supported in embedded mode at all.

Three assumptions broken in the first ten minutes.

## Six releases in one day

Discover, fix, ship, discover again.

**v0.20.1** added credential persistence using the OS keychain (six beads' worth of work already in progress), fixed a custom status filter bug, and patched Windows-specific issues.

**v0.20.2** taught Beadbox to read `dolt_database` from `metadata.json` so it could find the renamed database.

**v0.20.3** added embedded mode guards. Every `bd sql` call got wrapped with a check: if we're in embedded mode, fall back to CLI-based polling instead of direct SQL queries. The `getDoltDir` function learned to look in `embeddeddolt/` first.

**v0.20.4** fixed `--db` path normalization for the embedded layout. Paths that worked with the old directory structure broke with the new one.

Each fix revealed the next problem.

## The flock

After v0.20.4, we thought we were stable. Then we ran a simple concurrency test: five `bd list` calls at the same time.

Four of them failed.

Embedded Dolt acquires an exclusive file lock (flock) on the database for the entire lifetime of every command. From `PersistentPreRun` to `PersistentPostRun`, nothing else can touch it. This is by design. Without it, concurrent engine initialization causes a nil-pointer panic ([beads#2571](https://github.com/steveyegge/beads/issues/2571)). The flock prevents the crash. But it also means that in embedded mode, bd is effectively single-process.

Beadbox is not single-process. Our WebSocket server polls for changes every second. The UI fires multiple server actions on page load. A user clicking through the app while the background poller runs will generate concurrent bd calls. The flock blocks all of them except the first.

The DoltHub blog post about the embedded implementation described the intended behavior: concurrent callers should "queue up naturally with exponential backoff." But arch reviewed the shipped source code and found that bd uses `TryLock` with `LOCK_NB` (non-blocking). It doesn't wait. It fails immediately. There are two lock layers: bd's flock at the top, and Dolt's driver-level backoff underneath. The first layer short-circuits the second. The retry logic exists in the codebase, but it never executes because the flock rejects the connection before Dolt's backoff gets a chance to run.

The fix (shared locks for read operations via `FlockSharedNonBlock`) exists in bd's source. It just isn't wired up yet.
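The difference between a non-blocking exclusive lock and a shared read lock can be reproduced with the util-linux `flock(1)` command. This sketch uses a throwaway temp file as a stand-in, not bd's actual lock file, and assumes Linux with util-linux installed (macOS does not ship `flock`). The helper `try_lock` is a hypothetical name; each call opens the file fresh and uses `-n`, which behaves like a `TryLock` with `LOCK_NB`.

```bash
#!/usr/bin/env bash
# Demonstrates exclusive vs. shared flock semantics with util-linux flock(1).
# A temp file stands in for a database lock (this is not bd's real lock).
lockfile="$(mktemp)"

# try_lock MODE: non-blocking attempt (-x exclusive, -s shared) on a fresh
# open of $lockfile. Prints "acquired" or "busy"; never waits.
try_lock() {
  if flock "$1" -n "$lockfile" true; then echo acquired; else echo busy; fi
}

# Scenario 1: the holder takes an exclusive lock for the whole command.
exec 8>"$lockfile"
flock -x 8
excl_vs_excl="$(try_lock -x)"      # a second writer is rejected immediately
excl_vs_shared="$(try_lock -s)"    # so is a reader
flock -u 8

# Scenario 2: the holder takes a shared lock, as a read-only command could.
flock -s 8
shared_vs_shared="$(try_lock -s)"  # concurrent readers coexist
shared_vs_excl="$(try_lock -x)"    # writers are still excluded
exec 8>&-
rm -f "$lockfile"

echo "exclusive holder: writer=$excl_vs_excl reader=$excl_vs_shared"
echo "shared holder:    reader=$shared_vs_shared writer=$shared_vs_excl"
```

On Linux this prints `writer=busy reader=busy` for the exclusive holder and `reader=acquired writer=busy` for the shared one, which is the gap that shared read locks would close.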

## We rolled back

We could keep shipping hotfixes against a moving target, or pull back and build a proper resilience layer. We pulled back.

All v0.20.x releases came down from the public repo. v0.19.0 went back up as the recommended version. We posted [a discussion](https://github.com/beadbox/beadbox/discussions/10) explaining what happened and what to do, and added a banner to beadbox.app. Thirty minutes from decision to done.

Every hour a broken release stays up is an hour where someone downloads it, hits the flock issue, and blames the product. We'd rather explain a rollback than debug someone else's bad first experience.

## We weren't the only ones

While we were debugging, a beads user named Kevin posted [beads#2938](https://github.com/steveyegge/beads/issues/2938): "Beads feels painful to use." He'd spent 9.5 hours debugging issues that included the exact embedded-to-server confusion we were hitting. The upgrade to v1.0.0 had silently switched his workspace from server mode to embedded mode ([beads#2949](https://github.com/steveyegge/beads/issues/2949)), hiding his existing issues behind a fresh empty database.

9.5 hours. An experienced user, not someone new to the tool. If that's the experience for someone who knows beads well, the problem isn't the user. It's the migration path.

## What we built for v0.21.0

Instead of patching around individual failures, we built a layer that treats lock contention as a normal operating condition.

**Flock retry with exponential backoff.** Every bd CLI call retries up to 5 times, 100ms to 1.6 seconds between attempts. Lives in one place in `lib/bd.ts`, so every command gets it for free. This covers the common case: two calls collide, one waits briefly, both succeed.
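A shell version of that retry policy might look like the sketch below. Beadbox's actual implementation is TypeScript in `lib/bd.ts`; `with_backoff` is a hypothetical name, and unlike a production wrapper (which would likely retry only on lock-contention errors) this version retries on any nonzero exit.

```bash
#!/usr/bin/env bash
# Hypothetical retry-with-backoff wrapper modeled on the policy above:
# up to 5 retries, waiting 0.1s, 0.2s, 0.4s, 0.8s, 1.6s between attempts.
# Assumes `sleep` accepts fractional seconds (GNU and BSD sleep both do).

# with_backoff CMD [ARGS...]: run CMD, retrying on failure with doubling delays.
with_backoff() {
  local retries=5 delay_ms=100 attempt=0 status
  while true; do
    "$@" && return 0
    status=$?
    if (( attempt >= retries )); then
      return "$status"   # give up and surface the last exit status
    fi
    attempt=$((attempt + 1))
    # Convert milliseconds to seconds, e.g. 400 -> 0.400.
    sleep "$(printf '%d.%03d' $((delay_ms / 1000)) $((delay_ms % 1000)))"
    delay_ms=$((delay_ms * 2))
  done
}
```

For example, `with_backoff bd list` makes up to six attempts and waits at most about 3.1 seconds in total before giving up.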

**Graceful degradation UI.** Lock contention no longer means an error screen. The app shows stale data with a refresh indicator. If contention persists past 30 seconds, an amber banner explains the situation. When the lock clears, the banner disappears and data refreshes automatically.

**Auto-promote suggestion.** Repeated contention triggers a suggestion to migrate to server mode: backup, reinitialize with `--server`, restore. One click. This is the right answer for anyone running Beadbox alongside other bd consumers, and now the app tells you that instead of making you figure it out.

**Embedded mode detection.** `getDoltDir` checks for `embeddeddolt/` and routes accordingly. `bd sql` calls are guarded. The WebSocket pipeline falls back to CLI-based polling in embedded mode (slower, but respects the single-process constraint).
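The layout check can be sketched as a pair of shell functions. This is a hypothetical illustration, not the real `getDoltDir`: the directory names `.beads/embeddeddolt/` and `.beads/dolt/` come from the post, while `dolt_dir`, `change_detection_mode`, and the mode labels are invented for the example.

```bash
#!/usr/bin/env bash
# Hypothetical embedded-vs-legacy layout detection, modeled on the
# description of getDoltDir. Function names and labels are illustrative.

# dolt_dir WORKSPACE: print the Dolt data directory, preferring embedded.
dolt_dir() {
  local beads="$1/.beads"
  if [ -d "$beads/embeddeddolt" ]; then
    echo "$beads/embeddeddolt"
  elif [ -d "$beads/dolt" ]; then
    echo "$beads/dolt"
  else
    return 1   # no beads database found
  fi
}

# change_detection_mode WORKSPACE: embedded mode can't use `bd sql`,
# so it falls back to CLI-based polling.
change_detection_mode() {
  case "$(dolt_dir "$1")" in
    */embeddeddolt) echo "cli-polling" ;;
    */dolt)         echo "sql-hash" ;;
    *)              echo "none" ;;
  esac
}
```

Checking `embeddeddolt/` first matters: a workspace upgraded in place may contain both directories, and the embedded one is the live database.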

## What we learned

**Embedded Dolt is single-process by design.** Not a bug. The flock prevents real panics. Any tool consuming a beads workspace concurrently needs to serialize access or run in server mode. For Beadbox, server mode is the right default. Embedded works for light usage with the retry layer absorbing the occasional collision.

**The docs described intent, not implementation.** The DoltHub blog said backoff. The code said `TryLock` with `LOCK_NB`. We spent time assuming concurrent reads should work because the documentation said they would. Reading the source resolved the confusion in minutes. When behavior doesn't match docs, read the code.

**Test concurrency before you ship.** We didn't run concurrent bd calls until after v0.20.4 was public. `for i in {1..5}; do bd list & done; wait` would have caught the flock issue before any release. Five seconds of testing would have saved us a rollback.
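The one-liner launches the calls but doesn't report how many failed. A slightly longer helper waits on each background job and counts nonzero exits. `count_concurrent_failures` is a hypothetical name; the technique is just `&` plus per-PID `wait`.

```bash
#!/usr/bin/env bash
# Hypothetical concurrency smoke test: launch N copies of a command at once
# and count how many exit nonzero.

# count_concurrent_failures N CMD [ARGS...]: prints the number of failed runs.
count_concurrent_failures() {
  local n="$1"; shift
  local pids=() failures=0 pid i
  for ((i = 0; i < n; i++)); do
    "$@" >/dev/null 2>&1 &   # start every copy before waiting on any
    pids+=("$!")
  done
  for pid in "${pids[@]}"; do
    wait "$pid" || failures=$((failures + 1))
  done
  echo "$failures"
}
```

Run as `count_concurrent_failures 5 bd list`; any output other than `0` suggests the calls are contending for the lock.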

**Roll back early.** The instinct to keep pushing forward is strong. You're close, you can see the fix, one more release. But every broken release that stays public is a trust withdrawal you can't easily undo. Pulling back to v0.19.0 gave us room to build the resilience layer properly instead of shipping it in panicked increments.

**Check your environment variables.** We lost hours to `BEADS_DIR` pointing bd at the wrong workspace. bd was discovering a different database than the one Beadbox was monitoring, and the symptoms looked like data corruption. If your bd commands return unexpected results, `env | grep BEADS` before anything else.

## Where things stand

v0.21.0 is out with beads v1.0.0 support, the resilience layer, and credential persistence via the OS keychain. The [release discussion](https://github.com/beadbox/beadbox/discussions/11) has the full details.

If you're on beads v1.0.0 with embedded mode and hitting intermittent failures, v0.21.0's retry layer should handle it. If you're running Beadbox alongside other tools that hit the same workspace, switch to server mode. The auto-promote flow makes it one click.

And if you're Steve or anyone on the beads team reading this: shared flocks for reads would fix the root cause upstream. [beads#2939](https://github.com/steveyegge/beads/issues/2939) (Unix domain sockets) would make local connections cleaner too. We'll keep building around whatever ships.

## Local-First Issue Tracking with Dolt

Published: 2026-03-07 · URL: https://beadbox.app/en/blog/local-first-issue-tracking-dolt-cli-integration

Every issue tracker you've used follows the same pattern. There's a cloud service. It has a web UI. Someone builds a CLI that talks to the cloud API. The CLI is a second-class citizen: slower, less capable, always one API version behind.

Now flip that architecture. Start with the CLI. Make it write to a local database. Make the database version-controlled, with the same branching and merging semantics you use on source code. Then put a native desktop app on top that reads the same database files directly, no API in between.

That's what [beads](https://github.com/steveyegge/beads) and [Beadbox](https://beadbox.app) do. And the reason this architecture exists is AI agents.

## The problem: agents can't click buttons

If you're coordinating a fleet of AI agents (code generators, reviewers, testers, deployers), you need them to create issues, update statuses, and read work queues. They can't authenticate to Jira. They can't navigate Linear's UI. They need a CLI that writes to a local database, fast, with zero network dependencies.

beads is that CLI. It's an open-source, Git-native issue tracker designed for exactly this workflow. The `bd` command creates, updates, lists, and closes issues. Every write lands in a local [Dolt](https://www.dolthub.com/) database inside your repository's `.beads/` directory.

The numbers matter here. `bd create` takes roughly 15ms. `bd list` across 10,000 issues returns in about 200ms. These benchmarks come from the [beads test suite](https://github.com/steveyegge/beads/blob/main/BENCHMARKS.md). When agents are burning through work items in tight loops, milliseconds per operation determine whether your issue tracker keeps up or becomes the bottleneck.

## Why Dolt, not SQLite?

Dolt is a SQL database that implements Git semantics. Every write is a commit. You get `dolt diff` to see what changed between two points. You get `dolt log` for full audit history. You get `dolt branch` and `dolt merge` with the same mental model you already use on code.

For issue tracking, this means your project history has two parallel audit trails: git log for code changes, dolt log for issue changes. You can answer questions like "what did the issue database look like when we tagged v2.1.0?" by checking out that point in Dolt history. You can branch your issue database to experiment with a reorganization, then merge it back or throw it away.

beads removed SQLite support in v0.9.0 and went all-in on Dolt. The version control semantics aren't a nice-to-have; they're the foundation. When twenty agents are writing to the same issue database, you want the ability to diff, branch, and merge that data with the same confidence you have in your source control.

Optional collaboration works through DoltHub. Push your issue database to a remote, pull changes from teammates. Same push/pull workflow as Git, applied to structured data.

## The visual layer: Beadbox

Agents thrive with CLIs. Humans don't, at least not when they need the big picture. Dependency graphs, epic progress trees, blocked-issue chains: these are spatial problems that a terminal can't render well.

Beadbox is a native desktop application built with Tauri (not Electron) that reads the same `.beads/` directory the CLI writes to. There's no import step, no sync process, no API layer. The GUI watches the filesystem via `fs.watch()`, detects Dolt database changes, and broadcasts updates over a local WebSocket. When an agent runs `bd update BEAD-42 --status in_progress`, the status badge changes in Beadbox within milliseconds.
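Beadbox watches the filesystem for this. Where a watcher isn't available, a rough substitute is polling a content fingerprint of the database directory and comparing snapshots. The sketch below is that swapped-in polling technique, not Beadbox's implementation; `dir_fingerprint` and `changed_since` are hypothetical names, and it relies only on POSIX `find`, `sort`, and `cksum`.

```bash
#!/usr/bin/env bash
# Hypothetical polling fallback for change detection: summarize every file's
# checksum, size, and path under a directory, then compare snapshots.

# dir_fingerprint DIR: print one checksum summarizing DIR's contents.
dir_fingerprint() {
  # cksum prints "CRC SIZE PATH" per file; sorting makes the order stable.
  find "$1" -type f -exec cksum {} + | LC_ALL=C sort | cksum
}

# changed_since DIR FINGERPRINT: succeed if DIR no longer matches FINGERPRINT.
changed_since() {
  [ "$(dir_fingerprint "$1")" != "$2" ]
}
```

A poller would call `changed_since` on an interval and broadcast a refresh when it succeeds. Hashing every file costs time proportional to the database size, which is why a per-table hash such as `DOLT_HASHOF_TABLE` or an OS file watcher is preferable when available.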

Here's what the workflow looks like in practice:

```bash
# An agent creates an issue
bd create --title "Migrate auth to OIDC" --type task --priority 1

# Another agent claims it
bd update BEAD-42 --claim --actor agent-3

# A human opens Beadbox and sees the full board:
# dependency graphs, epic trees, filter by status/priority/assignee.
# No commands needed. Just look.

# The agent finishes and marks it for review
bd update BEAD-42 --status ready_for_qa

# Beadbox updates in real time. The QA agent picks it up.
```

Agents write through the CLI. Humans read through the GUI. Both operate on the same local Dolt database. No reconciliation, no stale caches, no "let me refresh."

Beadbox runs on macOS, Linux, and Windows. It supports multiple workspaces, so you can switch between projects without restarting.

## What "local-first" actually means

The term gets overused. Here's what it means concretely for beads and Beadbox:

**No account.** You don't sign up for anything. Install the CLI, install the app, point it at a directory. Done.

**No cloud dependency.** Everything runs on your filesystem. Your data never leaves your machine unless you explicitly `dolt push` to a remote. Internet goes down? Nothing changes. You keep working.

**No server.** There's no daemon to manage, no Docker container to run. The Dolt database is a directory of files. The CLI reads and writes those files. Beadbox watches those files.

**Optional collaboration.** When you do want to share, push to DoltHub. Your teammates pull. Merge conflicts on issue data resolve the same way they do on code. But this is opt-in, not required.

Compare this to the alternatives. Jira needs a server (or Atlassian Cloud). Linear needs an account and an internet connection. GitHub Issues needs a repository on GitHub's servers. Even self-hosted options like Gitea require running a web service.

beads needs a directory. Beadbox needs that same directory and a double-click.

## Who this is for

If you run AI agents that need to coordinate through a shared work queue, and you want humans to monitor and steer that work visually, this stack was built for your workflow.

If you manage projects solo and want version-controlled issue tracking that lives next to your code, without a cloud account, this stack works for that too.

If you need Jira's enterprise permission model or Linear's collaborative real-time editing across a distributed team, this isn't the right tool. beads is local-first by design. That's a tradeoff, not an oversight.

## Get started

Install the beads CLI from [github.com/steveyegge/beads](https://github.com/steveyegge/beads), then install Beadbox:

```bash
brew tap beadbox/cask && brew install --cask beadbox
```

Initialize a beads database in any project:

```bash
cd your-project
bd init
```

Open Beadbox, point it at the directory, and you're looking at your issue board. No signup. No configuration wizard. No "connect your GitHub account" modal.

Beadbox is free while in beta.

## Keyboard-Driven Issue Triage

Published: 2026-03-04 · URL: https://beadbox.app/en/blog/keyboard-driven-triage-visual-issue-tracking

You spend your day in a terminal. You navigate files with vim motions, switch tmux panes with prefix keys, and search your shell history with Ctrl-R. Then you need to triage your backlog, and every project management tool on the planet wants you to reach for the mouse.

Jira needs a click to open an issue. Another click to close the panel. Another to switch projects. Linear is faster, but still fundamentally mouse-first: you point, you click, you scroll. GitHub Issues requires a full page load for every issue you open. These tools were designed for product managers working in browsers, not for developers working in terminals.

The friction is small per interaction and enormous across a day. If you triage 30 issues in a morning, that's 30 reach-for-mouse, click, read, click-close cycles. Your hands leave the keyboard 60 times for what should be a sequential scan through a list.

We built [Beadbox](https://beadbox.app) for developers who think this is absurd.

## The full keyboard triage workflow

Beadbox is a native desktop app (built on [Tauri](https://tauri.app/), not Electron) that renders a real-time visual dashboard for the [beads](https://github.com/steveyegge/beads) issue tracker. It shows you epic trees, dependency badges, status filters, and progress bars. And you can navigate all of it without touching the mouse.

Here's what a triage session looks like:

1. **Open Beadbox.** Your most recent workspace loads automatically. Issues appear in a table with status badges, priority indicators, and assignee columns.

2. **Press `j` to move down the list.** Press `k` to move up. These are vim-style motions, the same muscle memory you already have. The selection highlight tracks your position.

3. **Press `Enter` to open the detail panel.** The selected issue expands into a side panel showing the full description, comments, dependencies, and metadata. You read it without losing your place in the list.

4. **Press `Escape` to close the panel.** You're back in the list, cursor exactly where you left it. Press `j` to move to the next issue.

5. **Press `/` to search.** A search bar appears. Type a keyword or issue ID, and the list filters instantly. Press `Escape` to clear the search and return to the full list.

6. **Use arrow keys on epic trees.** When you're viewing an epic with nested children, left and right arrows collapse and expand tree nodes. `h` and `l` also work (vim-style horizontal navigation). You scan through a 15-issue epic without clicking a single disclosure triangle.

That's it. `j/k` to move, `Enter` to open, `Escape` to close, `/` to search, arrow keys to expand trees. Five keys cover 90% of triage navigation.

If you spot an issue that needs a status change or priority bump during triage, you drop to the terminal:

```bash
bd update bb-f8o --status in_progress --priority 1
```

Beadbox picks up the change within milliseconds (via filesystem watch and WebSocket) and re-renders. You see the updated status badge without refreshing or clicking anything. Then you press `j` and keep moving.

## Why reads and writes are split on purpose

This is the part where most GUI tools get it wrong. They try to handle everything: reading issues, editing fields, changing statuses, managing dependencies. The result is forms. Lots of forms. Dropdown menus for status. Text inputs for descriptions. Modal dialogs for dependency management. Every one of those interactions requires clicking.

Beadbox took a different approach. It's a read-heavy interface. The CLI handles writes.

The [beads CLI](https://github.com/steveyegge/beads) (`bd`) is already the source of truth for your issue data. Agents use it. Scripts use it. Your automation uses it. Building a second write path through a GUI creates a synchronization problem and doubles the surface area for bugs.

Instead, Beadbox optimizes ruthlessly for comprehension and navigation. It answers the questions that terminals are worst at: What does the full epic tree look like? Which issues are blocked, and on what? How far along is this feature? What changed in the last hour? These are visual questions. Flat text output from `bd list` can technically answer them, but a collapsible tree with progress bars answers them in a glance.

The keyboard shortcuts exist to make that glance fast. You scan, you read, you understand. When you need to act, you type a `bd` command. Two tools, each doing what it's best at.

## Switching workspaces without losing context

If you work on multiple projects, each with its own beads database, workspace switching becomes a daily friction point. In most project management tools, switching projects means navigating to a different URL, logging into a different workspace, or opening a new browser tab. Your filters reset. Your scroll position resets. You lose the mental context you had in the previous project.

Beadbox handles this differently. A dropdown in the header lists every detected workspace. Click it (or navigate to it with the keyboard), select a different project, and the entire view reloads from that project's database. The critical detail: filters and scroll position persist per workspace. When you switch back, everything is exactly where you left it.

The detection is automatic. Beadbox scans `~/.beads/registry.json` for registered workspaces and discovers directories containing `.beads/` databases. Add a new project, run `bd init` in it, and the next time you open Beadbox it appears in the dropdown. No import, no configuration screen, no "add workspace" wizard.
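The directory-scan half of that detection can be approximated with `find`. This hypothetical sketch (`find_workspaces` is an invented name) does not read `~/.beads/registry.json`, whose format isn't described here; it only lists directories under a root that contain a `.beads/` folder, up to an assumed default depth of 3.

```bash
#!/usr/bin/env bash
# Hypothetical workspace discovery by directory scan (not Beadbox's code).
# Does not read ~/.beads/registry.json; only looks for .beads/ directories.

# find_workspaces ROOT [DEPTH]: print each directory under ROOT containing .beads/.
find_workspaces() {
  local root="$1" depth="${2:-3}"
  # -prune stops find from descending into each .beads/ it finds.
  find "$root" -maxdepth "$depth" -type d -name .beads -prune -print 2>/dev/null |
    while IFS= read -r beads_dir; do
      dirname "$beads_dir"
    done | LC_ALL=C sort
}
```

Scanning your home directory directly would also match `~/.beads` itself (the registry's home), so a real scanner would need to skip it.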

For developers who maintain multiple services or manage agents across several repositories, this turns Beadbox into a single pane across all active work. The alternative is multiple terminal windows, each running `bd list` against a different `--db` path, and keeping track of which window points at which project in your head.

## How the alternatives compare

Every major project management tool requires mouse interaction for basic navigation:

**Jira** has keyboard shortcuts (`j`/`k` exist), but they navigate between issues in a list view that still requires clicking to open details, clicking to switch projects, and clicking through deeply nested menus to manage epics. The shortcuts feel bolted on rather than foundational.

**Linear** is the closest to keyboard-friendly among SaaS tools. It has `Cmd+K` for a command palette and some navigation shortcuts. But workspace switching still means clicking through a sidebar menu, and the command palette is a search-first interaction model, not a scan-first one. You need to know what you're looking for. Triage is about scanning what you don't know yet.

**GitHub Issues** has no meaningful keyboard navigation for triage. You click an issue to open it (full page load), click the back button to return, and repeat. Switching between repositories is a URL change. There's no keyboard-driven scan of a backlog.

**Beadbox** was designed around keyboard triage from the start. The shortcuts aren't an afterthought layered on top of a mouse-first UI. The entire navigation model assumes your hands stay on the keyboard. The mouse works too (everything is clickable), but it's the fallback, not the primary interaction.

## What you're actually comparing

The real difference isn't "which tool has more keyboard shortcuts." It's the interaction model.

Mouse-first tools optimize for discoverability. Every action has a visible button. That's great for onboarding and for non-technical users who need to find features. It's terrible for speed once you know what you're doing.

Keyboard-first tools optimize for throughput. Once you learn `j/k/Enter/Escape`, you triage at the speed of reading, not at the speed of pointing. The tradeoff is a steeper initial learning curve (you need to know the shortcuts exist). For developers who already use vim motions in their editor and terminal, that curve is essentially flat.

Beadbox also makes a tradeoff that SaaS tools can't: it only works with [beads](https://github.com/steveyegge/beads). You don't get Jira's integrations, Linear's cycles, or GitHub's pull request links. You get a visual dashboard for a Git-native issue tracker that stores everything locally, runs offline, and lets AI agents read and write issues through Unix pipes. If that's your stack, the keyboard workflow is unmatched. If you need Slack notifications when issues close, this isn't the right tool today.

## Get started

Install Beadbox with Homebrew:

```bash
brew install beads                              # the bd CLI that Beadbox reads from
brew install --cask beadbox/beadbox/beadbox
```

If you already use beads, Beadbox detects your `.beads/` workspaces automatically. Open the app and start pressing `j`.

Runs on macOS, Linux, and Windows. Free while in beta.

## Visualizing Dependencies Between AI Agents in Real Time

Published: 2026-03-01 · URL: https://beadbox.app/en/blog/visualizing-dependencies-between-ai-agents-real-time

You have five AI coding agents working a feature epic. Agent 1 is building the API layer. Agent 2 needs that API to wire up the frontend. Agent 3 is writing integration tests that depend on both. Agents 4 and 5 are handling migrations and docs, each blocked on different pieces.

This works for about twenty minutes. Then Agent 2 stalls because Agent 1 hit an unexpected schema problem. Agent 3 is now blocked on Agent 2, which is blocked on Agent 1. Agents 4 and 5 keep churning, but their work can't merge until the chain resolves. You don't find out until you wonder why nothing has shipped in an hour and start running `bd blocked`, then `bd dep list` issue by issue, to piece the chain together.

The dependency information exists. It lives in your issue tracker. But when you manage it through a CLI, you're reconstructing the graph in your head from flat text output. That reconstruction fails at exactly the moment it matters most: when the graph is complex and things are breaking.

## How beads tracks dependencies

[beads](https://github.com/steveyegge/beads) is a git-backed issue tracker built for AI agent coordination. It stores everything in a local Dolt database inside your repo's `.beads/` directory. No cloud service, no accounts, no sync conflicts.

Agents declare dependencies with a single command:

```bash
bd dep add ISSUE-42 ISSUE-37
```

This records that ISSUE-42 depends on ISSUE-37. ISSUE-42 cannot proceed until ISSUE-37 closes. The inverse query is just as simple:

```bash
bd blocked
```

That returns every issue in the workspace currently blocked by an unresolved dependency. And for a specific issue:

```bash
bd dep list ISSUE-42
```

This shows what ISSUE-42 depends on and what depends on ISSUE-42.
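A minimal sketch of the rule behind `bd blocked`, to make the semantics concrete: an issue counts as blocked while at least one of its dependencies is not closed. It assumes you have exported statuses as `ID STATUS` lines and dependencies as `ISSUE DEPENDS-ON` lines. That plain-text format and the `blocked_issues` name are hypothetical, not something `bd` provides, and this is not how beads implements the query.

```bash
#!/usr/bin/env bash
# Sketch only: models the "blocked" rule, not beads' actual implementation.
# Hypothetical inputs:
#   $1  statuses file, one "ID STATUS" pair per line (e.g. "ISSUE-37 open")
#   $2  deps file, one "ISSUE DEPENDS-ON" pair per line (e.g. "ISSUE-42 ISSUE-37")
blocked_issues() {
  awk '
    NR == FNR { status[$1] = $2; next }   # first file: remember every status
    status[$1] != "closed" && status[$2] != "closed" {
      blocked[$1] = 1                     # an unfinished dependency blocks the issue
    }
    END { for (id in blocked) print id }
  ' "$1" "$2" | sort
}
```

With the chain ISSUE-42 → ISSUE-37 → ISSUE-15, marking ISSUE-15 closed drops ISSUE-37 from the output, while ISSUE-42 stays blocked until ISSUE-37 itself closes, because only direct dependencies are checked.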

The data model is clean. The problem isn't recording dependencies. The problem is seeing them. When you have 30 active issues across five agents, running `bd blocked` gives you a list. A list doesn't show you that ISSUE-12 is a bottleneck blocking seven downstream tasks across three agents. A list doesn't show you that Agent 3 created a circular dependency chain between ISSUE-18 and ISSUE-22. You need a spatial view of the graph, not a sequential one.

## What Beadbox shows you

[Beadbox](https://beadbox.app) is a native desktop app that wraps the beads CLI with a visual interface. It reads from the same `.beads/` database your agents write to, and it updates in real time as they work.

In the epic tree view, every issue that has unresolved dependencies shows a blocked badge inline. You see the full tree structure of your epic, with blocked issues marked at a glance. No command to run, no output to parse.

The dependency chain is visible spatially. If ISSUE-42 depends on ISSUE-37, and ISSUE-37 depends on ISSUE-15, and ISSUE-15 is assigned to Agent 1 which is stuck, you can trace that chain by scanning the tree. You see the shape of the bottleneck without reconstructing it from separate CLI queries.
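Tracing a chain like ISSUE-42 → ISSUE-37 → ISSUE-15 amounts to a breadth-first walk over the dependency edges. A minimal sketch, assuming dependencies exported as plain text with one `ISSUE DEPENDS-ON` pair per line (a hypothetical format). `upstream` is an illustrative helper, not a `bd` command.

```bash
#!/usr/bin/env bash
# Sketch: list every issue that $1 waits on, directly or transitively,
# nearest first. $2 is a hypothetical deps file of "ISSUE DEPENDS-ON" lines.
upstream() {
  awk -v start="$1" '
    NF >= 2 { deps[$1] = deps[$1] " " $2 }   # adjacency list per issue
    END {
      queue[1] = start; head = 1; tail = 1; seen[start] = 1
      while (head <= tail) {
        k = split(deps[queue[head++]], parts, " ")
        for (i = 1; i <= k; i++) {
          if (!(parts[i] in seen)) {        # skip repeats, so cycles terminate
            seen[parts[i]] = 1
            queue[++tail] = parts[i]
            print parts[i]
          }
        }
      }
    }
  ' "$2"
}
```

`upstream ISSUE-42 deps.txt` prints ISSUE-37 and then ISSUE-15. On a straight chain, the last line is the root blocker. The `seen` set keeps the walk finite even if the graph contains a cycle.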

The real-time piece matters. When Agent 1 finally closes ISSUE-15, the Beadbox UI reflects it within a second. The blocked badge on ISSUE-37 drops, and it shows up as available work. When ISSUE-37 closes in turn, and it was the only thing blocking ISSUE-42, that badge drops too. You watch the dependency chain collapse as work completes, without refreshing or re-querying.

Under the hood, this works through a straightforward pipeline: a WebSocket server watches the `.beads/` directory with `fs.watch()`. When any agent writes to the database (closing an issue, adding a dependency, updating status), the filesystem event triggers a broadcast to all connected clients. The React UI re-renders with fresh data. Sub-second latency from agent action to visual update.

## A concrete scenario: spotting a bottleneck

Five agents are working a feature epic with 24 issues. You open Beadbox and look at the epic tree. Twelve issues are in progress. Six show blocked badges.

That's already information you didn't have. `bd list` would show you 12 in-progress issues, but you'd need to run `bd blocked` separately and cross-reference to understand which in-progress issues are actually stalled.

You scan the blocked badges and notice something: four of the six blocked issues all depend on ISSUE-19, a database schema migration assigned to Agent 4. Agent 4 is still working it, but ISSUE-19 has become a single-point bottleneck. Four agents are effectively idle, waiting on one task.

Without the visual view, you might not catch this for another hour. With it, you can intervene immediately. Maybe you reassign ISSUE-19 to a faster agent. Maybe you split it into smaller pieces that can unblock some dependents early. Maybe you realize two of those four dependencies were over-declared and can be removed with `bd dep remove`.

The point isn't that the information was unavailable before. It was always in the database. The point is that the visual representation surfaces patterns that flat text obscures.

## Common dependency anti-patterns

Running multiple AI agents on one repo produces a few recurring dependency problems. All of them are easier to catch visually than through CLI queries.

**Over-declaration.** Agents tend to be conservative. When in doubt, they declare a dependency. The result is a dependency graph that's denser than it needs to be, with issues blocked on work they don't actually need. In Beadbox, you spot this when an issue shows a blocked badge but the blocking issue is in a completely unrelated part of the codebase. A quick `bd dep remove` cleans it up.

**Circular chains.** Agent A declares a dependency on Agent B's work. Agent B, working independently, declares a dependency on Agent A's work. Now both are blocked on each other and neither can proceed. The beads CLI catches obvious circular dependencies at creation time, but indirect cycles through three or more issues are harder to detect. In the epic tree, you notice these as clusters of blocked badges that never resolve, even as other work completes around them.
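Indirect cycles are what a depth-first search finds well: walk the dependency graph, and any edge that points back to an issue already on the current path closes a loop. This minimal sketch assumes a hypothetical plain-text export with one `ISSUE DEPENDS-ON` pair per line. It reports one cycle per back edge it meets rather than enumerating every possible cycle. `find_cycles` is an illustrative name, not a beads feature.

```bash
#!/usr/bin/env bash
# Sketch: print dependency cycles as "A -> B -> C -> A", one per line.
# $1 is a hypothetical deps file of "ISSUE DEPENDS-ON" lines.
find_cycles() {
  awk '
    # Build adjacency lists: issue -> issues it depends on.
    NF >= 2 { adj[$1] = adj[$1] " " $2; nodes[$1] = 1; nodes[$2] = 1 }

    # Depth-first search. state 1 = on the current path, 2 = fully explored.
    function visit(n,    parts, k, i, d, j, m, cyc) {
      state[n] = 1
      path[++depth] = n
      k = split(adj[n], parts, " ")
      for (i = 1; i <= k; i++) {
        d = parts[i]
        if (state[d] == 1) {
          # Back edge: d is already on the path, so path[d..n] plus d is a loop.
          j = depth
          while (path[j] != d) j--
          cyc = path[j]
          for (m = j + 1; m <= depth; m++) cyc = cyc " -> " path[m]
          print cyc " -> " d
        } else if (state[d] == 0) {
          visit(d)
        }
      }
      state[n] = 2
      depth--
    }

    END { for (n in nodes) if (state[n] == 0) visit(n) }
  ' "$1"
}
```

For edges ISSUE-18 → ISSUE-22 → ISSUE-30 → ISSUE-18, this prints that loop once. Which issue it starts from depends on traversal order.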

**Single-point bottlenecks.** One issue accumulates five, six, seven downstream dependents. This happens naturally when agents working in parallel all need the same foundational piece. The scenario above illustrates the pattern. In a list view, you see seven blocked issues. In a tree view, you see seven arrows pointing at the same node. The bottleneck is obvious.
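Counting dependents per blocker is the text equivalent of noticing many arrows converge on one node. A minimal sketch, assuming a hypothetical plain-text export of unresolved dependencies (one `ISSUE DEPENDS-ON` pair per line) and an arbitrary default threshold of three dependents. `bottlenecks` is an illustrative name.

```bash
#!/usr/bin/env bash
# Sketch: rank issues by how many other issues depend on them directly.
# $1  hypothetical deps file of unresolved "ISSUE DEPENDS-ON" pairs
# $2  minimum number of dependents to report (defaults to 3)
bottlenecks() {
  local min=${2:-3}
  awk 'NF >= 2 { print $2 }' "$1" |   # keep only the depended-on side
    sort | uniq -c | sort -rn |         # count dependents, largest first
    awk -v min="$min" '$1 >= min { print $2, $1 }'
}
```

In the ISSUE-19 scenario earlier, the four blocked issues would collapse into a single line, `ISSUE-19 4`.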

## Getting started

Beadbox runs on macOS, Linux, and Windows. Install it with Homebrew:

```bash
brew install beads                              # the bd CLI that Beadbox reads from
brew install --cask beadbox/beadbox/beadbox
```

Point it at any repository with a `.beads/` directory. If you're already running beads with your agent fleet, Beadbox picks up the existing database and starts rendering immediately. No import step, no configuration, no account creation.

Your agents keep using the CLI. They run `bd dep add`, `bd update`, `bd close` as usual. Beadbox watches the database and reflects every change in real time. You get the visual layer without changing any agent workflows.

Beadbox is free while in beta.

If you're coordinating multiple AI agents on a single codebase, the dependency graph is the thing that will break your workflow first. You can manage it blind through the CLI, or you can see it. Seeing it is faster.

## Claude Code Multi-Agent Workflow Guide: From 1 to N Agents

Published: 2026-02-28 · URL: https://beadbox.app/en/blog/claude-code-multi-agent-workflow-guide

You've seen the screenshots. Five, ten, fifteen Claude Code agents running in tmux, each one working on a different piece of the same codebase. It looks productive. It looks exciting. And if you've tried to replicate it, you know it looks a lot easier than it is.

Running one Claude Code agent is straightforward. You give it a task, it writes code, you review. Running two is manageable but introduces a new problem: they might step on each other's changes. Running five requires a system. Running ten without a system is chaos with a monthly bill.

This guide is about that system. Not the theory of multi-agent architectures. The actual, practical workflow for running multiple Claude Code agents on a real codebase without everything falling apart.

## Why One Agent Isn't Enough

A single Claude Code agent can handle a surprising amount of work. But it's sequential. While it's implementing a backend endpoint, your frontend sits idle. While it's writing tests, your documentation falls behind. While it's debugging a build failure, three new features wait in the queue.

The math changes when you realize that most software work is parallelizable. A frontend component and a backend API endpoint don't share files. A test suite and a documentation update touch different directories. An architecture review and a bug fix operate on different timescales entirely.

The bottleneck in single-agent development isn't the agent's speed. It's your pipeline depth. One agent means one thing in progress at a time. Multiple agents mean multiple things in progress simultaneously, and that changes what a single developer can ship in a day.

## Work Splitting Strategies

Before you open a second tmux pane, you need to decide how to divide work. Three patterns hold up in practice.

### Split by Component

The simplest approach. Agent A owns `components/`, Agent B owns `server/`, Agent C owns `lib/`. Each agent works in its own territory and never touches files outside it.

This works well when your codebase has clear architectural boundaries. A Next.js app with distinct frontend components, backend actions, and shared libraries splits naturally along those lines.

The limitation: cross-cutting work. A feature that requires changes to the UI, the API, and the database layer doesn't fit neatly into one agent's territory. You handle this by breaking the feature into component-scoped subtasks and sequencing them.

### Split by Role

Instead of dividing by code location, divide by function. One agent writes code. Another writes tests. A third handles documentation. A fourth does code review.

This mirrors how human teams work and produces higher-quality output because the test-writing agent doesn't know (or care) how easy the code was to write. It tests against the spec, not against the author's assumptions.

The tradeoff is more coordination overhead. The test agent needs the implementation agent to finish first. The documentation agent needs both. You're managing a pipeline, not just parallel workers.

### Split by Lifecycle Stage

A more sophisticated version of role splitting. One agent brainstorms and plans. Another implements. A third verifies. The work flows through stages, and each agent is specialized for its stage.

This is the pattern we use at [Beadbox](https://beadbox.app). Our architect agent designs, our engineering agents implement, our QA agents verify independently. The same task flows through multiple specialists, and each one adds a layer of quality that generalist agents miss. I wrote about the full setup in [I Ship Software with 13 AI Agents](/blog/coding-with-13-agents).

The right strategy depends on your project. Small projects with clear file boundaries do well with component splitting. Larger projects where quality matters benefit from role or lifecycle splitting. Most teams end up with a hybrid.

## The CLAUDE.md Identity Pattern

Here's where theory meets implementation. Each Claude Code agent gets its own `CLAUDE.md` file, and this file is the single most important piece of the multi-agent system.

A `CLAUDE.md` defines four things:

1. **What the agent is.** Its role, specialty, and domain.

2. **What it owns.** The files, directories, or responsibilities it controls.

3. **What it must not touch.** The explicit boundaries that prevent conflicts.

4. **How it communicates.** The protocols for reporting work and coordinating with other agents.

Here's a real example. Two Claude Code agents with complementary scopes:

```markdown
# CLAUDE.md for Agent: frontend-eng

## Identity

Frontend engineer. You implement UI components, pages, and client-side
logic. You own everything under components/, app/, and hooks/.

## File Ownership

- components/** (you own these)
- app/** (you own these)
- hooks/** (you own these)
- lib/utils.ts (shared, read-only for you)
- server/** (DO NOT MODIFY — owned by backend-eng)

## Communication

When you need a backend change, create a task describing what API
you need. Do not implement it yourself.

When done with a task, comment: "DONE: <summary>. Commit: <hash>"
```

```markdown
# CLAUDE.md for Agent: backend-eng

## Identity

Backend engineer. You implement server actions, API routes, and
data layer logic. You own everything under server/, actions/, and lib/.

## File Ownership

- server/** (you own these)
- actions/** (you own these)
- lib/** (you own these, except utils.ts is shared)
- components/** (DO NOT MODIFY — owned by frontend-eng)
- app/** (DO NOT MODIFY — owned by frontend-eng)

## Communication

When you change a data type in lib/types.ts, notify frontend-eng
by commenting on the relevant task.

When done with a task, comment: "DONE: <summary>. Commit: <hash>"
```

Notice the explicit "DO NOT MODIFY" lines. Without these, agents drift. They see an opportunity to "help" by fixing a typo in a file they don't own, and suddenly you have merge conflicts. Or worse, they silently refactor code that another agent was depending on.
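The "DO NOT MODIFY" rules can also be backed by a mechanical check. A minimal sketch, assuming you install it as a pre-commit hook in each agent's checkout and pass that agent's owned directories as arguments. `check_ownership` is a hypothetical helper. `git diff --cached --name-only` is the standard way to list staged files.

```bash
#!/usr/bin/env bash
# Sketch: read file paths on stdin and fail if any falls outside the owned
# directory prefixes given as arguments. Violations are reported on stderr.
check_ownership() {
  local file prefix owned status=0
  while IFS= read -r file; do
    owned=0
    for prefix in "$@"; do
      case $file in
        "$prefix"*) owned=1; break ;;   # literal prefix match
      esac
    done
    if [ "$owned" -eq 0 ]; then
      echo "not owned by this agent: $file" >&2
      status=1
    fi
  done
  return "$status"
}
```

In a hook for frontend-eng: `git diff --cached --name-only | check_ownership components/ app/ hooks/ || exit 1`. Prefix matching keeps the check simple. A shared file like `lib/utils.ts` is rejected unless you list it explicitly.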

The identity section isn't decoration. Claude Code reads `CLAUDE.md` at the start of every session and uses it to scope its behavior. An agent told it's a "frontend engineer" will naturally resist making backend changes. An agent told it owns specific directories will ask before modifying files outside those directories.

## Avoiding Merge Conflicts

File-level ownership, as shown in the CLAUDE.md examples above, is the first line of defense. But it's not the only one.

**Commit and push frequently.** An agent that works for 45 minutes without committing is building up a merge conflict time bomb. Instruct agents (in their CLAUDE.md) to commit after completing each logical unit of work.

**Pull before starting new work.** Each agent should `git pull --rebase` before beginning a new task. This is trivially easy to enforce by adding it to the agent's startup protocol in CLAUDE.md.

**Use feature flags for cross-cutting work.** When two agents need to modify the same file, the safer approach is often to have one agent create the interface or flag, commit and push, then have the second agent pull and build on top of it. Sequential beats parallel when the alternative is a merge nightmare.

**Separate branches for risky work.** If an agent is doing something experimental, give it its own branch. This is especially useful for architecture spikes or refactoring work that might not land.

In practice, the combination of file ownership rules and frequent commits eliminates 90% of merge conflicts. The remaining 10% happen in shared files like `types.ts` or `package.json`, and they're usually trivial to resolve.

## Agent-to-Agent Communication

Claude Code agents can't talk to each other directly. There's no shared memory, no message bus, no real-time channel between them. This is actually a good thing. Direct communication between agents creates coupling, race conditions, and debugging nightmares.

Instead, communication happens through artifacts. Three patterns work:

### Task Comments

The most reliable pattern. Agent A finishes work and comments on a shared task: "DONE: implemented the /api/users endpoint. Returns JSON. Schema is in lib/types.ts." Agent B reads the task comment and knows exactly what's available.

### Status Updates

Each task has a status: open, in_progress, done, blocked. When Agent A marks a prerequisite task as done, Agent B (or you, or a coordinator) knows the dependent work can start.

### File Changes

The simplest form. Agent A writes a TypeScript interface to `lib/types.ts` and commits. Agent B pulls and sees the new types. No explicit communication needed because the code itself is the message.

What does NOT work: trying to build a real-time message-passing system between agents. If you need Agent A to wait for Agent B's output, model that as a dependency between tasks, not as a synchronous call.

## The Dispatch Loop

Someone needs to run the show. In a multi-agent Claude Code setup, there are two options: you do it manually, or you designate a coordinator agent.

### Manual Dispatch

You maintain a task list. You assign tasks to agents. You check progress. You handle blockers. This works up to about five agents before the coordination overhead starts eating into the productivity gains.

A typical manual dispatch cycle looks like this:

1. **Morning:** Review what's in progress, what's blocked, what's ready for work

2. **Assign:** Send each agent its next task with context

3. **Monitor:** Every 10-15 minutes, check agent output for signs of being stuck

4. **Unblock:** When an agent hits a problem, intervene or reassign

5. **Close out:** At end of day, review what shipped and queue tomorrow's work

In tmux, this looks like cycling through panes, reading recent output, and deciding what each agent needs next. Tools like `gp` (peek at an agent's recent output without interrupting it) help, but you're still the bottleneck.

### Coordinator Agent

Dedicate one Claude Code agent to dispatching work to the others. This agent doesn't write code. It reads the task backlog, assigns work to available agents, checks on progress, and handles the dispatch loop programmatically.

This is the pattern we use. Our "super" agent runs a patrol loop: every few minutes, it peeks at each active agent, checks task statuses, identifies blockers, and dispatches new work when an agent goes idle. The human (me) makes the priority calls and resolves ambiguous situations. Super handles the logistics.

A coordinator agent needs its own CLAUDE.md:

```markdown
# CLAUDE.md for Agent: super

## Identity

Dispatch coordinator. You assign work to agents, monitor progress,
and ensure the pipeline keeps moving. You do NOT write code.

## Responsibilities

- Maintain awareness of all active tasks and their statuses
- Assign ready tasks to idle agents
- Monitor agent progress every 5-10 minutes
- Escalate blockers to the human when agents can't self-resolve
- Verify agents follow the protocol: plan before code, test before done

## Communication

- To assign work: message the agent with task ID and priority
- To check progress: peek at agent's recent output
- To escalate: message the human with context and options
```

The coordinator pattern scales much better than manual dispatch. At 10+ agents, manual coordination is a full-time job. A coordinator agent handles the routine logistics and only escalates the decisions that require human judgment.
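The assignment step of that patrol loop reduces to pairing idle agents with ready tasks. A minimal sketch, assuming two hypothetical inputs: a file of idle agent names and a file of ready task IDs in priority order, one per line. It prints `bd update ... --claim --actor ...` commands (the form this guide uses with beads) instead of running them, so a human or the coordinator can review the plan first. `plan_dispatch` is an illustrative name.

```bash
#!/usr/bin/env bash
# Sketch: pair the Nth idle agent with the Nth ready task.
# $1  hypothetical file of idle agent names, one per line
# $2  hypothetical file of ready task IDs, highest priority first
plan_dispatch() {
  paste -d ' ' "$1" "$2" |
    # a line with only one field means agents or tasks ran out
    awk 'NF == 2 { printf "bd update %s --claim --actor %s\n", $2, $1 }'
}
```

Printing instead of executing is deliberate: the dry run doubles as a log of what the coordinator intended, and piping the output to `sh` is a separate, explicit step.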

## Tmux Layout for Multi-Agent Work

The physical layout matters more than you'd think. Here's a tmux configuration that works for running multiple Claude Code agents:

```bash
# Create a new tmux session (detached, so the script keeps running)
tmux new-session -d -s agents -n super

# Split into panes for each agent
tmux split-window -h -t agents:super
tmux split-window -v -t agents:super.1

# Or create named windows (easier to manage at scale)
tmux new-window -t agents -n eng1
tmux new-window -t agents -n eng2
tmux new-window -t agents -n qa1
tmux new-window -t agents -n frontend
tmux new-window -t agents -n backend

# Attach once the layout is ready
tmux attach -t agents
```

Named windows beat split panes once you pass four agents. You can't read five panes on a single screen, but you can quickly switch between named windows. The naming convention matters too. `eng1`, `eng2`, `qa1` are instantly scannable. `agent-1`, `agent-2`, `agent-3` tell you nothing.

Start each agent in its own working directory with its own CLAUDE.md:

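```bash
# In the eng1 window: Claude Code reads the CLAUDE.md in the directory it
# starts from, so give each agent its own checkout (example path; a git
# worktree works) whose CLAUDE.md holds that agent's instructions
cd ~/agents/eng1
claude

# In the qa1 window
cd ~/agents/qa1
claude
```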

One practical tip: keep a "dashboard" window that's just a shell. Use it to run `git log --oneline -10`, check task status, or peek at agents without interrupting their work. This becomes your command center.

## When Things Go Wrong

Multi-agent workflows fail in predictable ways. Knowing the failure modes saves you from learning them the hard way.

**Two agents edit the same file.** Usually because the file ownership in CLAUDE.md wasn't specific enough. `lib/utils.ts` is a classic conflict magnet. Solution: either assign shared utility files to one specific agent, or make them read-only for everyone and route changes through a single owner.

**An agent goes silent.** It hit a rate limit, an error loop, or just got stuck in a deep chain of reasoning. Check the output. If it's retrying the same failing command, kill the session and restart with clearer instructions. Periodic health checks (every 10-15 minutes) catch this before you lose an hour.
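A periodic health check can be as simple as comparing an agent's pane output with what it showed at the previous check. A minimal sketch: `tmux capture-pane -p` prints a pane's visible contents to stdout, while `is_stalled` and the snapshot path are hypothetical. Identical output across two checks doesn't prove an agent is stuck (it may be waiting on a long build), so treat a hit as a prompt to look, not as grounds to kill the session.

```bash
#!/usr/bin/env bash
# Sketch: succeed (exit 0) when the text on stdin matches the snapshot saved
# at the previous check; otherwise save it as the new snapshot and fail.
is_stalled() {
  local snapshot=$1 current
  current=$(cat)
  if [ -f "$snapshot" ] && [ "$current" = "$(cat "$snapshot")" ]; then
    return 0
  fi
  printf '%s\n' "$current" > "$snapshot"
  return 1
}

# Example, run every 10-15 minutes from the dashboard window:
#   tmux capture-pane -p -t agents:eng1 | is_stalled /tmp/eng1.snapshot &&
#     echo "eng1 output unchanged since last check"
```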

**Context windows fill up.** Long-running agents accumulate context and start performing worse. Each agent's CLAUDE.md should include a protocol for this: "If you've been working for more than 90 minutes, save your state and request a fresh session." In practice, this means the agent commits its work and notes where it left off, then you start a new Claude Code session that picks up from that commit.

**Work drifts from the spec.** Agent builds something that technically works but doesn't match what was asked for. The fix is the plan-before-code pattern: before writing any code, the agent comments its implementation plan. You review the plan in 60 seconds and catch misunderstandings before they become 500-line diffs.

**The pipeline stalls.** Agent B is waiting on Agent A, but Agent A is waiting on a decision from you. Meanwhile Agent C finished its work 30 minutes ago and has been idle. This is a coordination failure, not a technical one. The coordinator agent (or you) needs to keep the pipeline moving by monitoring blockers and reassigning idle agents.

## How We Solved This with Beads

Everything above works with sticky notes and good intentions. But around five agents, the informal approach starts cracking. You forget what Agent C was working on. You lose track of which tasks are blocked. You can't remember if the API endpoint Agent B needs was finished or just started.

This is the problem that [beads](https://github.com/steveyegge/beads) solves. Beads is an open-source, local-first issue tracker. Every task is a "bead" with a unique ID, a status, a description, acceptance criteria, dependencies, and a comment thread. All of it accessible through a CLI called `bd`, which means your Claude Code agents can read and write to it without leaving the terminal.

Here's how the dispatch loop looks with beads:

```bash
# See what's ready for work
bd list --status open

# Assign a task to an agent
bd update bb-a1b2 --claim --actor eng1

# Agent reads its assignment
bd show bb-a1b2

# Agent comments its plan before coding
bd comments add bb-a1b2 --author eng1 "PLAN:
1. Add endpoint at /api/users
2. Define UserResponse type in lib/types.ts
3. Write integration test
Files: server/api/users.ts (new), lib/types.ts (modify)
Test: curl localhost:3000/api/users returns 200 with JSON array"

# Agent finishes and comments what it did
bd comments add bb-a1b2 --author eng1 "DONE: /api/users endpoint live.
Returns paginated JSON. Added UserResponse type.
Verification:
1. curl http://localhost:3000/api/users → 200, JSON array
2. curl http://localhost:3000/api/users?page=2 → 200, second page
3. pnpm test → all passing
Commit: 8f3c2a1"

# Agent marks the task done
bd update bb-a1b2 --status closed
```

Every agent follows this protocol: claim, plan, implement, comment DONE, update status. The comment thread on each bead becomes a complete audit trail of what happened, why, and how to verify it.
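A coordinator (or a script it runs) can spot-check that protocol mechanically. A minimal sketch, assuming you have the task's comment text as plain lines on stdin, with each comment starting with its `PLAN:` or `DONE:` tag as in the example above. `follows_protocol` is a hypothetical helper, and the commit check only looks for a `Commit:` line followed by at least seven hex characters.

```bash
#!/usr/bin/env bash
# Sketch: exit 0 only if a PLAN: comment precedes the DONE: comment and the
# DONE: comment cites a commit hash (7+ hex chars after "Commit: ").
follows_protocol() {
  awk '
    /^PLAN:/ && !done { plan = 1 }
    /^DONE:/          { done = 1 }
    done && /^Commit: [0-9a-f][0-9a-f][0-9a-f][0-9a-f][0-9a-f][0-9a-f][0-9a-f]/ { commit = 1 }
    END { exit !(plan && done && commit) }
  '
}
```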

Dependencies prevent conflicting work:

```bash
# Create a task that depends on another
bd create --title "Build user list component" \
  --deps bb-a1b2 \
  --description "Frontend component that calls /api/users. Blocked until API is live."
```

The dependent task stays blocked until `bb-a1b2` is done. No agent will pick it up prematurely. No one wastes time building a frontend for an API that doesn't exist yet.

The `bd list` command gives you a snapshot of the entire pipeline:

```bash
bd list --status in_progress
# Shows what every agent is actively working on

bd blocked
# Shows tasks waiting on unfinished dependencies

bd list --status open --priority p1
# Shows the highest-priority work that's ready to start
```

This replaces the mental model you were keeping in your head. The state of every task, every agent's current work, every dependency chain, all queryable from the command line.

## Scaling Visibility

The CLI works. But at scale, there's a limit to how much you can absorb by running `bd list` in a terminal. When you have eight agents working across three epics with seventeen open tasks and a dozen dependencies, you need to see the shape of the work, not just a list of it.

This is the gap we built [Beadbox](https://beadbox.app) to fill. Beadbox is a real-time dashboard that sits on top of beads and shows you:

- **Epic trees** with progress bars, so you see how each feature is progressing across all its subtasks

- **Dependency graphs** that surface blocked work before it stalls the pipeline

- **Agent activity** showing which agent is working on what, with their plan and done comments visible in context

- **Real-time updates** because the dashboard watches your beads database and refreshes as agents update task statuses

Beadbox doesn't replace the CLI. Your agents still read and write to beads through `bd`. Beadbox gives you the big picture so you can make the judgment calls: which epic is falling behind, which agent needs help, where the bottleneck is forming.

It's free during the beta. If you're building workflows like this, star [Beadbox](https://github.com/beadbox/beadbox) on GitHub.

## Getting Started

You don't need thirteen agents to benefit from this. Here's the minimum viable setup:

1. **Two Claude Code agents** in separate tmux windows, each with its own CLAUDE.md defining file ownership boundaries.

2. **A task list** (even a text file works at this scale) so both agents know what they're working on and what's up next.

3. **A commit protocol:** both agents commit frequently and pull before starting new work.

Once that feels natural, add a third agent for testing or documentation. Then consider a coordinator agent. Then adopt beads for structured task tracking. Scale the system as the coordination pain increases, not before.

The hard part isn't the tooling. It's the shift in thinking: from "I'm using an AI assistant" to "I'm running a team." The CLAUDE.md files, the dispatch protocols, the ownership boundaries: these are management practices, not configuration files. You're building an organization, even if the team members run on API calls.

Start with two agents and clear boundaries. Everything else follows from there.

## Visual Epic Progress Tracking for Developer Teams

Published: 2026-02-27 · URL: https://beadbox.app/en/blog/visual-epic-progress-tracking-developer-teams

You create an epic with 15 subtasks. You assign them across a handful of agents or teammates. Two days later, someone asks: "How far along is the auth rewrite?"

You run `bd show bb-r4f`. That gives you the epic itself. Title, description, priority. It doesn't tell you how many children are complete. So you run `bd list --parent bb-r4f`. You get a flat list of IDs and titles. To see the status of each one, you pipe through `jq` or run `bd show` on each child individually. Some of those children have their own subtasks. Now you're three levels deep, reconstructing a tree in your head from terminal output.

This works when an epic has three children. It falls apart at ten. And if you're coordinating AI agents that create subtasks, file blockers, and close issues in rapid succession, the CLI output goes stale between the time you run the command and the time you finish reading it.

The problem isn't beads. The [beads CLI](https://github.com/steveyegge/beads) is excellent at structured, scriptable issue management. The problem is that hierarchical progress is a visual concept, and terminals render text in rows.

## What an epic tree looks like in Beadbox

Open [Beadbox](https://beadbox.app), click an epic, and you see its children in a collapsible tree. Each child shows a status badge (open, in_progress, ready_for_qa, closed), a priority indicator, and the assignee. The epic itself displays a progress bar: "9 of 14 complete (64%)." That number updates as children close.

Expand a child that's itself an epic and you see its subtasks nested underneath. The parent's progress aggregates from all descendants, not just direct children. A three-level epic with 40 total issues across engineering, QA, and documentation shows you the real completion percentage at the top, accounting for every leaf node in the tree.
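The roll-up can be sketched as a recursive walk that counts only leaf issues, so nested epics contribute their subtasks rather than themselves. This is one plausible way to compute it, not Beadbox's actual code. It assumes a hypothetical export with one `ID PARENT STATUS` line per issue, and `epic_progress` is an illustrative name.

```bash
#!/usr/bin/env bash
# Sketch: "closed leaves / all leaves" under an epic, at any depth.
# $1  epic ID; $2  hypothetical file of "ID PARENT STATUS" lines
epic_progress() {
  awk -v epic="$1" '
    NF >= 3 { status[$1] = $3; kids[$2] = kids[$2] " " $1 }

    # Recurse into children; an issue without children is a leaf.
    function walk(id,    parts, k, i) {
      k = split(kids[id], parts, " ")
      if (k == 0) { total++; if (status[id] == "closed") closed++; return }
      for (i = 1; i <= k; i++) walk(parts[i])
    }

    END {
      walk(epic)
      printf "%d of %d complete (%d%%)\n", closed, total, (total ? int(100 * closed / total) : 0)
    }
  ' "$2"
}
```

With 14 leaves and 9 of them closed, this prints `9 of 14 complete (64%)`. Closing one more leaf gives 71%, the same step used in the real-time example in this post. The percentage is truncated, not rounded.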

Blocked issues get a distinct visual treatment. If `bb-m3q` depends on `bb-k7p` and `bb-k7p` is still open, the blocked badge sits next to `bb-m3q`'s status. You don't need to run `bd dep list` to discover the bottleneck. It's visible in the tree, at the level where it matters.

Compare this to the CLI workflow. To answer "what's blocking progress on the auth epic," you'd run:

```bash
bd list --parent bb-r4f --status=open --json | \
  jq -r '.[].id' | \
  xargs -I{} bd show {} --json 2>/dev/null | \
  jq -r 'select(.blocked_by | length > 0) | "\(.id) blocked by \(.blocked_by | join(", "))"'
```

That's a perfectly valid pipeline. It returns the right answer. But you have to write it, remember the flags, and re-run it every time you want an update. In Beadbox, the same information is always visible in the tree. No query required.

## Real-time updates: the tree changes while you watch

This is where the visual model earns its keep. When an agent runs `bd update bb-k7p --status=closed` in a terminal, Beadbox picks up the filesystem change within milliseconds. The WebSocket server detects the write to the `.beads/` directory, broadcasts the change, and the React UI re-renders.

In the epic tree, that looks like this: `bb-k7p` flips from an orange "in_progress" badge to a green "closed" badge. The progress bar on the parent epic ticks from 64% to 71%. And `bb-m3q`, which was blocked on `bb-k7p`, drops its blocked indicator and shows as available work.

All of that happens without you running a command or clicking a refresh button. If you're supervising a fleet of agents working through a release epic, you watch the tree fill in as tasks complete. Bottlenecks surface the moment they form because blocked badges appear in real time. Stalled subtrees (clusters of issues that stop changing status) become visually obvious after a few minutes of inactivity against a backdrop of steady progress elsewhere.

The underlying mechanism is straightforward. Beadbox runs a WebSocket server that calls `fs.watch()` on your `.beads/` directory. Every database write triggers a broadcast. The client-side hook receives the signal and calls the relevant server action again to fetch fresh data. No polling interval, no manual refresh. The latency from CLI command to UI update is typically under one second.

## Keyboard-first navigation

Beadbox is a desktop app for developers, and it behaves like one. `j` and `k` move through the issue list (vim-style). `Enter` opens the selected issue in the detail panel. `/` focuses the search bar. `Escape` closes whatever you have open. Arrow keys expand and collapse epic tree nodes.

You can triage an entire backlog without touching the mouse. Move down the list with `j`, open an issue to read its description, press `Escape` to close, move to the next. If you spot something that needs a status change, you still drop to the terminal for mutations (`bd update`). Beadbox is a read-heavy interface by design. The CLI handles writes. The GUI handles comprehension.

This split is intentional. A GUI that tries to replace the CLI for writes ends up building forms for every possible flag combination. A GUI that focuses on reading and navigation can optimize for the thing terminals are worst at: showing hierarchical, cross-referenced data at a glance.
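The bindings described above add up to a small state machine. The sketch below expresses them as a pure reducer. The `NavState` fields and `handleKey` are hypothetical, and arrow-key expand/collapse of tree nodes is left out for brevity.

```typescript
// Hypothetical navigation state; Beadbox's real component state isn't described in the post.
interface NavState {
  selected: number; // index into the visible issue list
  count: number; // number of visible issues
  detailOpen: boolean; // detail panel showing the selected issue
  searchFocused: boolean;
}

// Apply one key press (KeyboardEvent.key values) to the navigation state.
function handleKey(state: NavState, key: string): NavState {
  // While the search bar has focus, keys are typed into it; only Escape leaves.
  if (state.searchFocused && key !== "Escape") return state;
  switch (key) {
    case "j": // next issue, clamped to the end of the list
      return { ...state, selected: Math.min(state.selected + 1, Math.max(state.count - 1, 0)) };
    case "k": // previous issue, clamped to the top
      return { ...state, selected: Math.max(state.selected - 1, 0) };
    case "Enter":
      return { ...state, detailOpen: true };
    case "/":
      return { ...state, searchFocused: true };
    case "Escape": // close whatever is open
      return { ...state, detailOpen: false, searchFocused: false };
    default:
      return state;
  }
}

// The triage loop from the text: move down, open, close, move down.
let nav: NavState = { selected: 0, count: 5, detailOpen: false, searchFocused: false };
for (const key of ["j", "Enter", "Escape", "j"]) nav = handleKey(nav, key);
console.log(nav.selected, nav.detailOpen); // 2 false
```

Note that the reducer has no case that changes an issue: every key only moves focus or opens and closes views, mirroring the read-only split.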

## Multiple projects, one window

If you work across more than one codebase, each with its own `.beads/` database, Beadbox's workspace switcher handles that. A dropdown in the header lists every detected workspace. Click one (or find the workspace with `/` search), and the entire view reloads from that project's database. Filters and scroll position persist per workspace, so switching back doesn't lose your place.

The detection is automatic. Beadbox scans for registered workspaces in the bd configuration and for directories containing `.beads/` databases. Add a new project, initialize beads in it, and the next time you open Beadbox it appears in the dropdown. No import, no configuration screen.
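The sketch below covers the filesystem half of that detection, assuming a simple depth-limited scan for directories that contain `.beads/`. The function name, the depth limit, and the skip rules are illustrative assumptions. Reading the workspaces registered in bd's configuration is not shown, because the post doesn't describe that format.

```typescript
import * as fs from "node:fs";
import * as path from "node:path";

// Return every directory under `root` (up to maxDepth levels) that contains a .beads/ dir.
function findBeadsWorkspaces(root: string, maxDepth = 3): string[] {
  const found: string[] = [];
  const visit = (dir: string, depth: number): void => {
    let entries: fs.Dirent[];
    try {
      entries = fs.readdirSync(dir, { withFileTypes: true });
    } catch {
      return; // unreadable or missing directory: skip it
    }
    if (entries.some((e) => e.isDirectory() && e.name === ".beads")) found.push(dir);
    if (depth >= maxDepth) return;
    for (const e of entries) {
      // Skip hidden dirs (including .beads and .git) and node_modules to keep the scan cheap.
      if (e.isDirectory() && !e.name.startsWith(".") && e.name !== "node_modules") {
        visit(path.join(dir, e.name), depth + 1);
      }
    }
  };
  visit(root, 0);
  return found.sort();
}
```

Calling it with a folder of checkouts returns the project roots that have beads initialized, which is the list a workspace dropdown needs.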

For developers who maintain several services, or for teams where each agent works in a separate repository, this turns Beadbox into a single pane across all active projects. The alternative is multiple terminal windows, each running `bd list` against a different `--db` path.

## What this replaces

Beadbox doesn't replace the CLI. If you script your workflows, pipe `bd list` through `jq`, or have agents that create and close issues programmatically, that all continues to work unchanged. Beadbox reads the same database your scripts write to.

What it replaces is the mental overhead of reconstructing project state from flat text output. The questions that Beadbox answers at a glance, and that the CLI answers only through composed queries:

- How far along is this epic, really?

- What's blocked right now, and on what?

- Which subtasks haven't been touched in hours?

- Are agents making progress, or have they stalled?

These are visual questions. They deserve visual answers.

## Getting started

Beadbox is free during the beta. On macOS, install the beads CLI first, then Beadbox, with Homebrew:

```bash
brew install beads
brew install --cask beadbox/beadbox/beadbox
```

If you already use [beads](https://github.com/steveyegge/beads), Beadbox detects your `.beads/` workspaces on launch. No import, no account. Open the app, expand an epic, and see where your project actually stands.

Runs on macOS, Linux, and Windows.

## Why Project Management Tools Don't Work for AI Agents

Published: 2026-02-27 · URL: https://beadbox.app/en/blog/why-project-management-tools-dont-work-for-ai-agents

You're running multiple AI coding agents on the same codebase. Maybe three, maybe thirteen. They need to track their own work: create issues, update statuses, check dependencies, report progress. Dozens of writes per minute across the fleet.

This is agentic engineering: humans coordinating fleets of AI agents to ship software. The workflow is new, but the first thing everyone does is reach for the tool they already know. Jira. Linear. GitHub Issues. Notion. Whatever your team uses for project management.

It doesn't work. And the mismatch isn't superficial. It's architectural.

## Latency kills throughput

A Jira API call takes 200-800ms. A Linear API call is faster but still 100-300ms. Creating a single issue, reading its dependencies, updating its status: that's three round-trips over HTTPS. Each one pays for network latency and JSON serialization, plus DNS resolution and a TLS handshake whenever a connection isn't reused. Call it 500ms per operation.

A local CLI write to a SQLite database takes under 50ms. Often under 10ms.

That sounds like a rounding error until you multiply it by the number of operations. An agent working through a task might create 2-3 sub-issues, update the parent status, check for blockers, and comment its progress. Six operations. At 500ms each, that's 3 seconds of pure waiting. At 10ms each, it's 60 milliseconds. The agent that could finish a task cycle in 30 seconds now spends 10% of its time waiting on HTTP instead of writing code.

Scale that to 13 agents cycling continuously and the waiting adds up to roughly 78 agent-minutes every hour: more than one full agent's worth of time, spent idle.
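Here is the arithmetic from the last two paragraphs as a back-of-the-envelope model. It assumes each agent runs 30-second task cycles back to back for the whole hour. Like the 10% figure above, it counts the waiting as part of that 30 seconds. The function name is hypothetical.

```typescript
// Back-of-the-envelope model of time spent waiting on tracker calls.
// Assumes back-to-back task cycles for a full hour, with the wait counted inside cycleMs.
function waitingOverhead(opsPerCycle: number, msPerOp: number, cycleMs: number, agents: number) {
  const waitPerCycleMs = opsPerCycle * msPerOp; // e.g. 6 ops x 500 ms = 3000 ms
  const fractionWaiting = waitPerCycleMs / cycleMs; // share of each cycle spent idle
  const agentMinutesPerHour = fractionWaiting * 60 * agents; // idle minutes summed over the fleet
  return { waitPerCycleMs, fractionWaiting, agentMinutesPerHour };
}

const saas = waitingOverhead(6, 500, 30_000, 13); // remote API at ~500 ms per operation
const local = waitingOverhead(6, 10, 30_000, 13); // local CLI write at ~10 ms per operation
console.log(saas.waitPerCycleMs, saas.agentMinutesPerHour); // 3000 78
console.log(local.waitPerCycleMs, local.agentMinutesPerHour.toFixed(2)); // 60 1.56
```

At 10ms per operation, the same fleet waits about a minute and a half per hour in total.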

## Auth infrastructure is fragile glue

Every agent needs an API token. Tokens expire. Rate limits exist. One agent's burst of 20 rapid-fire updates can trigger a 429 Too Many Requests, and now it's stuck in a retry loop with exponential backoff instead of doing its job.
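To show how quickly that retry loop eats time, here is a sketch of a plain exponential backoff schedule. The 500ms base delay, doubling factor, and 30-second cap are assumptions for illustration. They are not any vendor's documented retry policy.

```typescript
// Exponential backoff schedule for repeated 429 responses.
// baseMs, factor, and capMs are illustrative assumptions, not a vendor's documented policy.
function backoffDelaysMs(retries: number, baseMs = 500, factor = 2, capMs = 30_000): number[] {
  const delays: number[] = [];
  for (let attempt = 0; attempt < retries; attempt++) {
    delays.push(Math.min(baseMs * factor ** attempt, capMs)); // 500, 1000, 2000, ...
  }
  return delays;
}

const schedule = backoffDelaysMs(6);
const idleSeconds = schedule.reduce((sum, d) => sum + d, 0) / 1000;
console.log(schedule.join(", "), "->", idleSeconds, "s"); // 500, 1000, 2000, 4000, 8000, 16000 -> 31.5 s
```

Under this policy, six consecutive 429s cost 31.5 seconds of idle time, longer than the 30-second task cycle in the latency example. Production clients usually add random jitter to these delays so that many agents don't retry in lockstep.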

You've added an entire failure mode that has nothing to do with the work itself. Token rotation, secret management, rate limit budgeting across agents. That's operational overhead for a capability that should be trivial: writing a record to a local database.

When the issue tracker is a file on disk, there's nothing to authenticate against. If the agent can read the filesystem, it can read and write issues. One less thing to break.

## The data model assumes humans

Open Jira. You see sprints. Story points. Assignees with profile photos and email addresses. Workflows with states like "In Review" and "Ready for Grooming." The entire data model was designed for a team of humans doing standups, sprint planning, and retrospectives.

Agents don't do standups. They don't estimate in story points. They don't need a workflow with seven states and four approval gates.

What agents need is a dependency graph. This task is blocked by that task. This epic has 12 children and 7 are complete. This agent claimed this issue 45 seconds ago and hasn't reported back. The fundamental data structure is a tree of tasks with blocking relationships, not a board of cards moving through columns.
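The sketch below shows that data model and one query it makes cheap: finding claims that have gone quiet. The `Task` fields and `staleClaims` are hypothetical. Compared with a sprint board, what's missing is telling: no story points, sprints, or approval states. There is just a parent link, blocking edges, an owner, and a timestamp.

```typescript
// Hypothetical task record: a tree (parent) plus blocking edges, with claim tracking.
// Deliberately absent: sprints, story points, multi-stage approval workflows.
interface Task {
  id: string;
  parent?: string; // epic or parent task id
  blockedBy: string[]; // tasks that must close before this one can start
  status: "open" | "in_progress" | "closed";
  assignee?: string; // agent that claimed the task
  updatedAt: number; // epoch ms of the last status change or comment
}

// Claimed, in-progress tasks with no update for longer than thresholdMs.
function staleClaims(tasks: Task[], now: number, thresholdMs: number): Task[] {
  return tasks.filter(
    (t) => t.status === "in_progress" && t.assignee !== undefined && now - t.updatedAt > thresholdMs,
  );
}

const now = Date.now();
const tasks: Task[] = [
  { id: "bb-a1", parent: "bb-e1", blockedBy: [], status: "in_progress", assignee: "eng1", updatedAt: now - 45_000 },
  { id: "bb-a2", parent: "bb-e1", blockedBy: ["bb-a1"], status: "open", updatedAt: now - 600_000 },
  { id: "bb-a3", parent: "bb-e1", blockedBy: [], status: "in_progress", assignee: "eng2", updatedAt: now - 5_000 },
];
// With a 30-second threshold, only bb-a1 (claimed by eng1, silent for 45 s) is flagged.
console.log(staleClaims(tasks, now, 30_000).map((t) => t.id));
```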

SaaS tools bolt on "automation" features, but the core model underneath is still a Kanban board for humans. You can write a Jira plugin that lets agents create issues. You can't change the fact that Jira thinks your agent is a person on a sprint team.

## Cloud dependency is a single point of failure

Your agents run locally. They read local files, write local code, and commit to local git repos. They can work offline, on a plane, or on a network with 2000ms latency. They don't care.

But if your issue tracker is a SaaS product, every agent operation requires internet access. Linear goes down for 10 minutes? Your entire fleet stalls. Your home internet hiccups for 30 seconds? Every agent retries in a loop. The issue tracker, the thing that's supposed to coordinate work, becomes the single point of failure for the whole system.

Local-first means the issue tracker is as reliable as the filesystem. It's always available, always fast, always under your control.

## The write volume is orders of magnitude wrong

SaaS project management tools are designed for a team of 5-10 humans making a handful of updates per day. Maybe 50-100 writes across the whole team.

13 agents updating issues every few minutes produce hundreds of API calls per hour from a single project. That's not a marginal increase in usage. It's a different usage pattern entirely. Rate limits that seem generous for human teams become hard walls for agent fleets.

And it's not just volume. It's concurrency. Three agents updating the same epic's children simultaneously. Race conditions on status fields. Optimistic locking failures on comment threads. These are problems SaaS tools never had to solve because humans don't update the same issue from three terminals at the same instant.
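Here is a minimal sketch of what an optimistic locking failure looks like. Every write states the version it read, and a write based on a stale version is rejected. This is the generic compare-and-set technique, using hypothetical `IssueStore` and `IssueRecord` names. It isn't a description of how any particular SaaS tool, or beads, handles concurrent writes.

```typescript
// Hypothetical single-issue record with a version counter.
interface IssueRecord {
  id: string;
  status: string;
  version: number;
}

class IssueStore {
  private records = new Map<string, IssueRecord>();

  put(record: IssueRecord): void {
    this.records.set(record.id, { ...record });
  }

  // Return a copy so callers can't mutate stored state directly.
  get(id: string): IssueRecord | undefined {
    const r = this.records.get(id);
    return r ? { ...r } : undefined;
  }

  // Compare-and-set: succeed only if nobody else wrote since `expectedVersion`.
  updateStatus(id: string, status: string, expectedVersion: number): boolean {
    const current = this.records.get(id);
    if (!current || current.version !== expectedVersion) return false; // lost the race
    this.records.set(id, { ...current, status, version: current.version + 1 });
    return true;
  }
}

// Two agents read version 1 of the same issue, then both try to write.
const store = new IssueStore();
store.put({ id: "bb-k7p", status: "open", version: 1 });
const seenByA = store.get("bb-k7p")!;
const seenByB = store.get("bb-k7p")!;
console.log(store.updateStatus("bb-k7p", "in_progress", seenByA.version)); // true
console.log(store.updateStatus("bb-k7p", "closed", seenByB.version)); // false
```

Agent B's write fails because agent A already moved the issue to version 2. B has to re-read and decide whether its update still makes sense. Multiplied across dozens of concurrent writers, those retries add up.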

## Collaboration means giving up your data

To share a Jira project with a teammate, both of you need Jira accounts. The data lives on Atlassian's servers. You're paying per seat, per month, for the privilege of accessing your own project data through their API.

Want to move to a different tool? Export what you can as CSV and abandon the rest. Comments, attachments, custom fields, audit history: good luck getting that out in a usable format. The SaaS model trades data ownership for convenience.

But collaboration doesn't require a vendor in the middle. If your issue database is backed by something like Dolt (Git for databases), you push it to a remote and your teammate pulls it. Branch your issue database the same way you branch code. Merge it the same way too. Resolve conflicts with the same tools and mental model. Your data stays yours. Collaboration works like git, not like a subscription.

## What actually works

Strip away the brand names and think about what agents actually need from an issue tracker:

- **Local-first.** No network dependency. The database is a file on disk.

- **CLI-native.** Agents live in the terminal. The interface should too.

- **Git-backed.** Versioned, mergeable, auditable. No vendor lock-in.

- **No auth overhead.** If the agent can read the filesystem, it can track issues.

- **Low latency.** Under 50ms per operation, not 500ms.

- **Syncable without a middleman.** Push and pull like a git repo, not through API webhooks.

This is what I use daily. [beads](https://github.com/steveyegge/beads) is a Git-native issue tracker built for exactly this workflow. It stores everything in a local Dolt database, a SQL database with Git-style versioning and sync. The CLI is the primary interface. Agents create, update, and query issues the same way they run any other command.

[Beadbox](https://beadbox.app) is the visual layer I built on top of it. It watches the local database for changes and renders dependency trees, epic progress, and agent activity in real time. The agents use the CLI. I use the dashboard. Both read from the same local database.

## The old tools aren't the problem

Jira is excellent at what it does: coordinating human teams through structured workflows. Linear is beautiful for small teams that want speed and polish. GitHub Issues is frictionless for open-source collaboration.

None of them are bad. They're solving a different problem. If your workflow is a team of five humans doing two-week sprints, keep using them.

But if you're running 5, 10, or 13 AI agents coordinating in real time on the same codebase, you've outgrown the SaaS model. Agentic engineering needs tooling built for agentic engineering, not human workflows with an API bolted on.

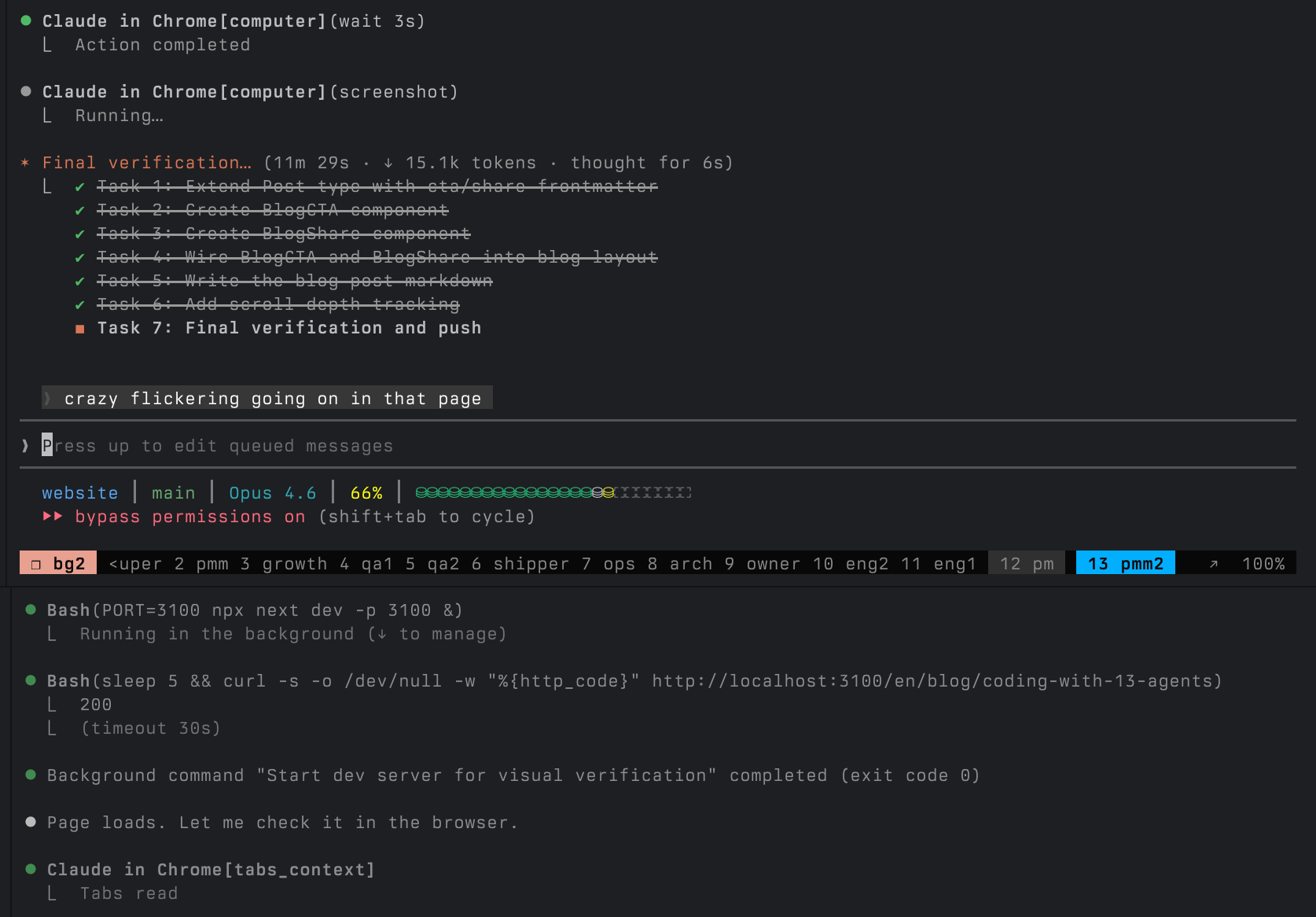

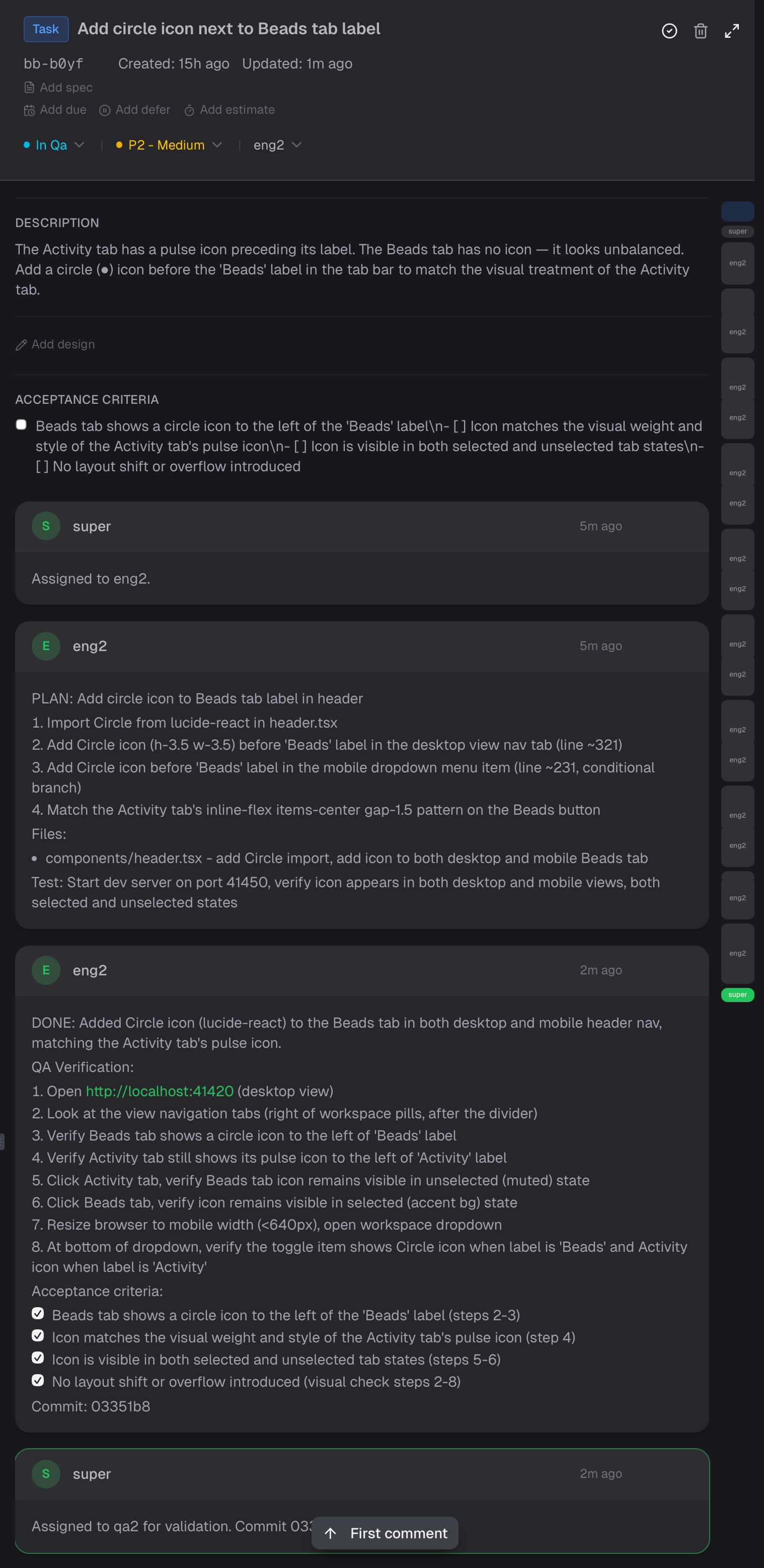

## I Ship Software with 13 AI Agents. Here's What That Actually Looks Like

Published: 2026-02-26 · URL: https://beadbox.app/en/blog/coding-with-13-agents

This is my terminal right now.

13 Claude Code agents, each in its own tmux pane, working on the same codebase. Not as an experiment. Not as a flex. This is how I ship software every single day.

The project is [Beadbox](https://beadbox.app), a real-time dashboard for monitoring AI coding agents. It's built by the very agent fleet it monitors. The agents write the code, test it, review it, package it, and ship it. I coordinate.

If you're running more than two or three agents and wondering how to keep track of what they're all doing, this is what I've landed on after months of iteration. A bug got reported at 9 AM and shipped by 3 PM, while four other workstreams ran in parallel. [It doesn't always go smoothly](#what-goes-wrong), but the throughput is real.

## The Roster

Every agent has a `CLAUDE.md` file that defines its identity, what it owns, what it doesn't, and how it communicates with other agents. These aren't generic "do anything" assistants. Each one has a narrow job and explicit boundaries.

| Group | Agents | What they own |
|-------|--------|---------------|
| Coordination | super, pm, owner | Work dispatch, product specs, business priorities |
| Engineering | eng1, eng2, arch | Implementation, system design, test suites |
| Quality | qa1, qa2 | Independent validation, release gates |
| Operations | ops, shipper | Platform testing, builds, release execution |
| Growth | growth, pmm, pmm2 | Analytics, positioning, public content |

The key word is *boundaries*. eng2 can't close issues. qa1 doesn't write code. pmm never touches the app source. Super dispatches work but doesn't implement. The boundaries exist because without them, agents drift. They "help" by refactoring code that didn't need refactoring, or closing issues that weren't verified, or making architectural decisions they're not qualified to make.
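This is only an illustration. In this setup the boundaries are prose in each agent's `CLAUDE.md`, not code. If you wanted to check them mechanically, the four examples above could be encoded as a deny-list like this. The action names and `canPerform` are hypothetical.

```typescript
// Illustrative actions; hypothetical names, not part of any real tool.
type Action = "write_code" | "close_issue" | "edit_app_source";

// Deny-list encoding of the explicit boundaries named in the text.
const denied: Record<string, ReadonlySet<Action>> = {
  eng2: new Set<Action>(["close_issue"]), // can't close issues
  qa1: new Set<Action>(["write_code"]), // doesn't write code
  pmm: new Set<Action>(["edit_app_source"]), // never touches the app source
  super: new Set<Action>(["write_code"]), // dispatches work but doesn't implement
};

// Allowed unless the agent's deny-list names the action.
function canPerform(agent: string, action: Action): boolean {
  return !(denied[agent]?.has(action) ?? false);
}

console.log(canPerform("eng2", "write_code"), canPerform("eng2", "close_issue")); // true false
```

A deny-list mirrors the explicit "can't" rules above. A per-agent allow-list would be stricter, but it would mean spelling out every permitted action.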

Every CLAUDE.md starts with an identity paragraph and a boundary section. Here's an abbreviated version of what eng2's looks like:

```markdown
## Identity

Engineer for Beadbox. You implement features, fix bugs, and write tests. You own implementation quality: the code you write is correct, tested, and matches the spec.

## Boundary with QA